update

This commit is contained in:

198

README.md

Normal file

@@ -0,0 +1,198 @@

|

||||

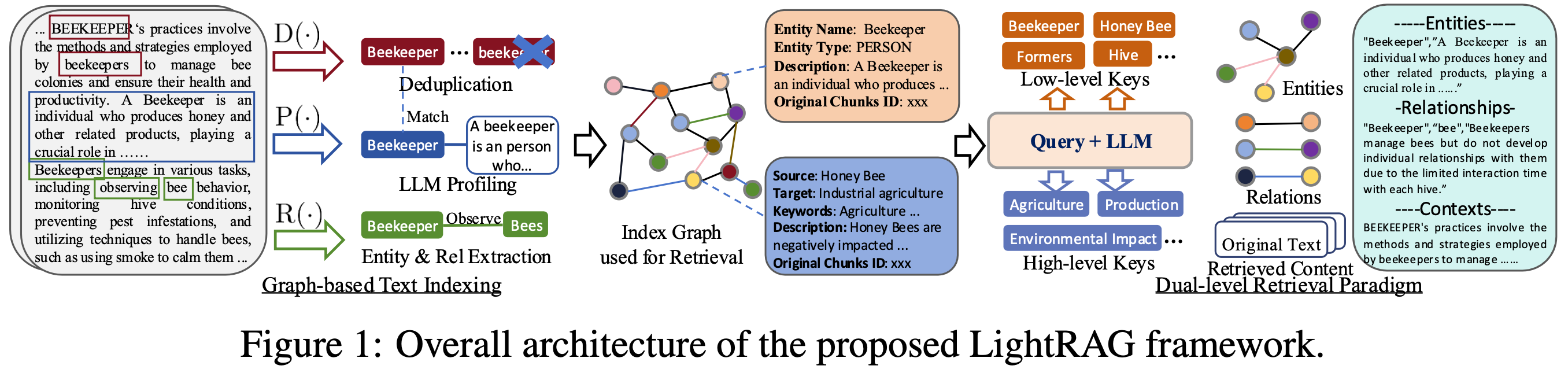

# LightRAG: Simple and Fast Retrieval-Augmented Generation

|

||||

|

||||

|

||||

|

||||

|

||||

<a href='https://github.com/HKUDS/LightRAG'><img src='https://img.shields.io/badge/Project-Page-Green'></a>

|

||||

<a href='https://arxiv.org/abs/2410.05779'><img src='https://img.shields.io/badge/arXiv-2410.05779-b31b1b'></a>

|

||||

|

||||

This repository hosts the code of LightRAG. The structure of this code is based on [nano-graphrag](https://github.com/gusye1234/nano-graphrag).

|

||||

|

||||

## Install

* Install from source

```bash
cd LightRAG
pip install -e .
```

* Install from PyPI

```bash
pip install lightrag-hku
```

## Quick Start

* Set the OpenAI API key in your environment: `export OPENAI_API_KEY="sk-..."`
* Download the demo text, "A Christmas Carol" by Charles Dickens:

```bash
curl https://raw.githubusercontent.com/gusye1234/nano-graphrag/main/tests/mock_data.txt > ./book.txt
```

Use the Python snippet below:

```python
from lightrag import LightRAG, QueryParam

rag = LightRAG(working_dir="./dickens")

with open("./book.txt") as f:
    rag.insert(f.read())

# Perform naive search
print(rag.query("What are the top themes in this story?", param=QueryParam(mode="naive")))

# Perform local search
print(rag.query("What are the top themes in this story?", param=QueryParam(mode="local")))

# Perform global search
print(rag.query("What are the top themes in this story?", param=QueryParam(mode="global")))

# Perform hybrid search (the mode string is spelled "hybird" in this version of the code)
print(rag.query("What are the top themes in this story?", param=QueryParam(mode="hybird")))
```

Batch Insert

```python
rag.insert(["TEXT1", "TEXT2", ...])
```
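
For context on what happens to each inserted text: in this commit, `LightRAG.ainsert` splits every document into overlapping token windows (`chunk_token_size=1200` and `chunk_overlap_token_size=100` by default) before entity extraction. The sketch below only illustrates the sliding-window idea. It assumes whitespace-separated words stand in for tiktoken tokens, and `chunk_by_window` is a hypothetical helper, not part of the package.

```python
def chunk_by_window(text: str, max_tokens: int = 8, overlap: int = 2) -> list[dict]:
    """Split text into overlapping windows of at most max_tokens tokens.

    Assumption: tokens are whitespace-separated words; the library itself
    tokenizes with tiktoken.
    """
    if overlap >= max_tokens:
        raise ValueError("overlap must be smaller than max_tokens")
    tokens = text.split()
    step = max_tokens - overlap  # each window starts `step` tokens after the previous one
    chunks = []
    for index, start in enumerate(range(0, len(tokens), step)):
        window = tokens[start : start + max_tokens]
        chunks.append(
            {
                "tokens": len(window),
                "content": " ".join(window),
                "chunk_order_index": index,
            }
        )
    return chunks


if __name__ == "__main__":
    words = " ".join(f"w{i}" for i in range(20))
    for chunk in chunk_by_window(words):
        print(chunk["chunk_order_index"], chunk["tokens"], chunk["content"])
```

Consecutive windows share `overlap` tokens, so an entity mentioned near a chunk boundary is likely to appear whole in at least one chunk.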

Incremental Insert

```python
rag = LightRAG(working_dir="./dickens")

with open("./newText.txt") as f:
    rag.insert(f.read())
```
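
Incremental insertion can skip already-indexed text because `ainsert` derives each document ID from the stripped content (`compute_mdhash_id(..., prefix="doc-")`) and asks the storage which IDs are missing (`filter_keys`) before doing any work. A minimal sketch of that idea follows, assuming an MD5-based ID and a plain dict as the store. `content_id` and `new_docs_only` are hypothetical helpers, and the real hash function lives in `lightrag/utils.py`, which may differ.

```python
from hashlib import md5


def content_id(content: str, prefix: str = "doc-") -> str:
    """Derive a stable ID from the text itself (MD5 is an assumption here)."""
    return prefix + md5(content.encode("utf-8")).hexdigest()


def new_docs_only(texts: list[str], store: dict[str, dict]) -> dict[str, dict]:
    """Return only the documents whose content-derived ID is not in the store."""
    candidates = {content_id(t.strip()): {"content": t.strip()} for t in texts}
    return {k: v for k, v in candidates.items() if k not in store}


if __name__ == "__main__":
    store: dict[str, dict] = {}
    first = new_docs_only(["Marley was dead.", "Bah! Humbug!"], store)
    store.update(first)
    # The first text repeats an existing document (modulo whitespace).
    second = new_docs_only(["Bah! Humbug!  ", "God bless us, every one!"], store)
    print(len(first), len(second))
```

Because the ID depends only on the content, re-inserting the same text into the same `working_dir` is expected to be a no-op.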

## Evaluation

### Dataset

The dataset used in LightRAG can be downloaded from [TommyChien/UltraDomain](https://huggingface.co/datasets/TommyChien/UltraDomain).

### Generate Query

LightRAG uses the following prompt to generate high-level queries, with the corresponding code located in `examples/generate_query.py`.

```
Given the following description of a dataset:

{description}

Please identify 5 potential users who would engage with this dataset. For each user, list 5 tasks they would perform with this dataset. Then, for each (user, task) combination, generate 5 questions that require a high-level understanding of the entire dataset.

Output the results in the following structure:
- User 1: [user description]
    - Task 1: [task description]
        - Question 1:
        - Question 2:
        - Question 3:
        - Question 4:
        - Question 5:
    - Task 2: [task description]
        ...
    - Task 5: [task description]
- User 2: [user description]
    ...
- User 5: [user description]
    ...
```
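
The prompt asks for 5 users, 5 tasks per user, and 5 questions per task, so a complete reply holds 5 × 5 × 5 = 125 questions. The sketch below shows one way to fill the `{description}` placeholder and pull the questions back out of a reply. It is an illustration under assumptions, not the code in `examples/generate_query.py`: `PROMPT_TEMPLATE` is abbreviated, `build_prompt` and `extract_questions` are hypothetical helpers, and the regular expression assumes the model follows the `- Question N: ...` layout.

```python
import re

# Abbreviated version of the prompt above; {description} is the only placeholder.
PROMPT_TEMPLATE = (
    "Given the following description of a dataset:\n\n"
    "{description}\n\n"
    "Please identify 5 potential users who would engage with this dataset. ..."
)

# Matches one "- Question N: text" line; empty questions are skipped.
QUESTION_LINE = re.compile(
    r"^[ \t]*-[ \t]*Question[ \t]+\d+:[ \t]*(\S.*?)[ \t]*$", re.MULTILINE
)


def build_prompt(description: str) -> str:
    """Insert the dataset description into the (abbreviated) template."""
    return PROMPT_TEMPLATE.format(description=description)


def extract_questions(reply: str) -> list[str]:
    """Collect the text that follows each '- Question N:' marker."""
    return QUESTION_LINE.findall(reply)


if __name__ == "__main__":
    reply = (
        "- User 1: Agronomist\n"
        "    - Task 1: Plan crop rotation\n"
        "        - Question 1: What trends appear across seasons?\n"
        "        - Question 2: How do regions differ?\n"
    )
    print(extract_questions(reply))
```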

### Batch Eval

To evaluate the performance of two RAG systems on high-level queries, LightRAG uses the following prompt, with the specific code available in `examples/batch_eval.py`.

```
---Role---
You are an expert tasked with evaluating two answers to the same question based on three criteria: **Comprehensiveness**, **Diversity**, and **Empowerment**.
---Goal---
You will evaluate two answers to the same question based on three criteria: **Comprehensiveness**, **Diversity**, and **Empowerment**.

- **Comprehensiveness**: How much detail does the answer provide to cover all aspects and details of the question?
- **Diversity**: How varied and rich is the answer in providing different perspectives and insights on the question?
- **Empowerment**: How well does the answer help the reader understand and make informed judgments about the topic?

For each criterion, choose the better answer (either Answer 1 or Answer 2) and explain why. Then, select an overall winner based on these three categories.

Here is the question:
{query}

Here are the two answers:

**Answer 1:**
{answer1}

**Answer 2:**
{answer2}

Evaluate both answers using the three criteria listed above and provide detailed explanations for each criterion.

Output your evaluation in the following JSON format:

{{
    "Comprehensiveness": {{
        "Winner": "[Answer 1 or Answer 2]",
        "Explanation": "[Provide explanation here]"
    }},
    "Empowerment": {{
        "Winner": "[Answer 1 or Answer 2]",
        "Explanation": "[Provide explanation here]"
    }},
    "Overall Winner": {{
        "Winner": "[Answer 1 or Answer 2]",
        "Explanation": "[Summarize why this answer is the overall winner based on the three criteria]"
    }}
}}
```
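
The doubled braces (`{{` and `}}`) in the prompt suggest it is meant to be filled in with Python's `str.format`, which renders them as single literal braces while substituting `{query}`, `{answer1}`, and `{answer2}`. The sketch below illustrates that, plus one way to read the judge's JSON reply. It is an assumption-laden example rather than the code in `examples/batch_eval.py`: `EVAL_TEMPLATE` is abbreviated, and `build_eval_prompt` and `parse_verdict` are hypothetical helpers.

```python
import json

# Abbreviated template: doubled braces survive str.format as literal braces.
EVAL_TEMPLATE = (
    "Here is the question:\n{query}\n\n"
    "**Answer 1:**\n{answer1}\n\n"
    "**Answer 2:**\n{answer2}\n\n"
    "Output your evaluation in the following JSON format:\n"
    '{{\n    "Overall Winner": {{"Winner": "[Answer 1 or Answer 2]"}}\n}}'
)


def build_eval_prompt(query: str, answer1: str, answer2: str) -> str:
    """Fill the three placeholders; the JSON skeleton keeps single braces."""
    return EVAL_TEMPLATE.format(query=query, answer1=answer1, answer2=answer2)


def parse_verdict(reply: str) -> dict[str, str]:
    """Map each criterion in the judge's JSON reply to its winner."""
    verdict = json.loads(reply)
    return {criterion: body["Winner"] for criterion, body in verdict.items()}


if __name__ == "__main__":
    print(build_eval_prompt("What are the top themes?", "Answer A", "Answer B"))
    reply = '{"Overall Winner": {"Winner": "Answer 2", "Explanation": "More varied."}}'
    print(parse_verdict(reply))
```

In practice a model may wrap its JSON in extra text, so a real pipeline would likely need more forgiving parsing than `json.loads` alone.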

### Overall Performance Table

| | **Agriculture** | | **CS** | | **Legal** | | **Mix** | |
|----------------------|-------------------------|-----------------------|-----------------------|-----------------------|-----------------------|-----------------------|-----------------------|-----------------------|
| | NaiveRAG | **LightRAG** | NaiveRAG | **LightRAG** | NaiveRAG | **LightRAG** | NaiveRAG | **LightRAG** |
| **Comprehensiveness** | 32.69% | **67.31%** | 35.44% | **64.56%** | 19.05% | **80.95%** | 36.36% | **63.64%** |
| **Diversity** | 24.09% | **75.91%** | 35.24% | **64.76%** | 10.98% | **89.02%** | 30.76% | **69.24%** |
| **Empowerment** | 31.35% | **68.65%** | 35.48% | **64.52%** | 17.59% | **82.41%** | 40.95% | **59.05%** |
| **Overall** | 33.30% | **66.70%** | 34.76% | **65.24%** | 17.46% | **82.54%** | 37.59% | **62.40%** |
| | RQ-RAG | **LightRAG** | RQ-RAG | **LightRAG** | RQ-RAG | **LightRAG** | RQ-RAG | **LightRAG** |
| **Comprehensiveness** | 32.05% | **67.95%** | 39.30% | **60.70%** | 18.57% | **81.43%** | 38.89% | **61.11%** |
| **Diversity** | 29.44% | **70.56%** | 38.71% | **61.29%** | 15.14% | **84.86%** | 28.50% | **71.50%** |
| **Empowerment** | 32.51% | **67.49%** | 37.52% | **62.48%** | 17.80% | **82.20%** | 43.96% | **56.04%** |
| **Overall** | 33.29% | **66.71%** | 39.03% | **60.97%** | 17.80% | **82.20%** | 39.61% | **60.39%** |
| | HyDE | **LightRAG** | HyDE | **LightRAG** | HyDE | **LightRAG** | HyDE | **LightRAG** |
| **Comprehensiveness** | 24.39% | **75.61%** | 36.49% | **63.51%** | 27.68% | **72.32%** | 42.17% | **57.83%** |
| **Diversity** | 24.96% | **75.34%** | 37.41% | **62.59%** | 18.79% | **81.21%** | 30.88% | **69.12%** |
| **Empowerment** | 24.89% | **75.11%** | 34.99% | **65.01%** | 26.99% | **73.01%** | 45.61% | **54.39%** |
| **Overall** | 23.17% | **76.83%** | 35.67% | **64.33%** | 27.68% | **72.32%** | 42.72% | **57.28%** |
| | GraphRAG | **LightRAG** | GraphRAG | **LightRAG** | GraphRAG | **LightRAG** | GraphRAG | **LightRAG** |
| **Comprehensiveness** | 45.56% | **54.44%** | 45.98% | **54.02%** | 47.13% | **52.87%** | **51.86%** | 48.14% |
| **Diversity** | 19.65% | **80.35%** | 39.64% | **60.36%** | 25.55% | **74.45%** | 35.87% | **64.13%** |
| **Empowerment** | 36.69% | **63.31%** | 45.09% | **54.91%** | 42.81% | **57.19%** | **52.94%** | 47.06% |
| **Overall** | 43.62% | **56.38%** | 45.98% | **54.02%** | 45.70% | **54.30%** | **51.86%** | 48.14% |
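
Each pair of cells in the table reads as a head-to-head win rate: the share of questions for which the judge picked one system over the other on that criterion, which is why the two numbers in a pair add up to roughly 100%. The sketch below shows how such percentages could be tallied from per-question verdicts. `win_rates` is a hypothetical helper, and the verdict list is made up for illustration; it is not data from the evaluation.

```python
from collections import Counter


def win_rates(winners: list[str]) -> dict[str, float]:
    """Turn per-question winners into percentages rounded to two decimals."""
    if not winners:
        raise ValueError("need at least one verdict")
    counts = Counter(winners)
    return {name: round(100 * n / len(winners), 2) for name, n in counts.items()}


if __name__ == "__main__":
    # Made-up verdicts for illustration only.
    verdicts = ["LightRAG", "NaiveRAG", "LightRAG", "LightRAG"]
    print(win_rates(verdicts))
```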

## Code Structure

```
.
├── examples
│   ├── batch_eval.py
│   ├── generate_query.py
│   ├── insert.py
│   └── query.py
├── lightrag
│   ├── __init__.py
│   ├── base.py
│   ├── lightrag.py
│   ├── llm.py
│   ├── operate.py
│   ├── prompt.py
│   ├── storage.py
│   └── utils.py
├── LICENSE
├── README.md
├── requirements.txt
└── setup.py
```

## Citation

```
@article{guo2024lightrag,
  title={LightRAG: Simple and Fast Retrieval-Augmented Generation},
  author={Zirui Guo and Lianghao Xia and Yanhua Yu and Tu Ao and Chao Huang},
  year={2024},
  eprint={2410.05779},
  archivePrefix={arXiv},
  primaryClass={cs.IR}
}
```

5

lightrag/__init__.py

Normal file

@@ -0,0 +1,5 @@

from .lightrag import LightRAG, QueryParam

__version__ = "0.0.2"
__author__ = "Zirui Guo"
__url__ = "https://github.com/HKUDS/LightRAG"

116

lightrag/base.py

Normal file

@@ -0,0 +1,116 @@

from dataclasses import dataclass, field
from typing import TypedDict, Union, Literal, Generic, TypeVar

import numpy as np

from .utils import EmbeddingFunc

TextChunkSchema = TypedDict(
    "TextChunkSchema",
    {"tokens": int, "content": str, "full_doc_id": str, "chunk_order_index": int},
)

T = TypeVar("T")

@dataclass
class QueryParam:
    # NOTE: "hybird" (sic) is the spelling that LightRAG.aquery checks for.
    mode: Literal["local", "global", "hybird", "naive"] = "global"
    only_need_context: bool = False
    response_type: str = "Multiple Paragraphs"
    top_k: int = 60
    max_token_for_text_unit: int = 4000
    max_token_for_global_context: int = 4000
    max_token_for_local_context: int = 4000

@dataclass
class StorageNameSpace:
    namespace: str
    global_config: dict

    async def index_done_callback(self):
        """commit the storage operations after indexing"""
        pass

    async def query_done_callback(self):
        """commit the storage operations after querying"""
        pass

@dataclass
class BaseVectorStorage(StorageNameSpace):
    embedding_func: EmbeddingFunc
    meta_fields: set = field(default_factory=set)

    async def query(self, query: str, top_k: int) -> list[dict]:
        raise NotImplementedError

    async def upsert(self, data: dict[str, dict]):
        """Use 'content' field from value for embedding, use key as id.
        If embedding_func is None, use 'embedding' field from value
        """
        raise NotImplementedError

@dataclass
class BaseKVStorage(Generic[T], StorageNameSpace):
    async def all_keys(self) -> list[str]:
        raise NotImplementedError

    async def get_by_id(self, id: str) -> Union[T, None]:
        raise NotImplementedError

    async def get_by_ids(
        self, ids: list[str], fields: Union[set[str], None] = None
    ) -> list[Union[T, None]]:
        raise NotImplementedError

    async def filter_keys(self, data: list[str]) -> set[str]:
        """Return the keys from `data` that do not exist in the storage yet."""
        raise NotImplementedError

    async def upsert(self, data: dict[str, T]):
        raise NotImplementedError

    async def drop(self):
        raise NotImplementedError

@dataclass
class BaseGraphStorage(StorageNameSpace):
    async def has_node(self, node_id: str) -> bool:
        raise NotImplementedError

    async def has_edge(self, source_node_id: str, target_node_id: str) -> bool:
        raise NotImplementedError

    async def node_degree(self, node_id: str) -> int:
        raise NotImplementedError

    async def edge_degree(self, src_id: str, tgt_id: str) -> int:
        raise NotImplementedError

    async def get_node(self, node_id: str) -> Union[dict, None]:
        raise NotImplementedError

    async def get_edge(
        self, source_node_id: str, target_node_id: str
    ) -> Union[dict, None]:
        raise NotImplementedError

    async def get_node_edges(
        self, source_node_id: str
    ) -> Union[list[tuple[str, str]], None]:
        raise NotImplementedError

    async def upsert_node(self, node_id: str, node_data: dict[str, str]):
        raise NotImplementedError

    async def upsert_edge(
        self, source_node_id: str, target_node_id: str, edge_data: dict[str, str]
    ):
        raise NotImplementedError

    async def clustering(self, algorithm: str):
        raise NotImplementedError

    async def embed_nodes(self, algorithm: str) -> tuple[np.ndarray, list[str]]:
        raise NotImplementedError("Node embedding is not used in lightrag.")

300

lightrag/lightrag.py

Normal file

@@ -0,0 +1,300 @@

import asyncio
import os
from dataclasses import asdict, dataclass, field
from datetime import datetime
from functools import partial
from typing import Type, cast

from .llm import gpt_4o_complete, gpt_4o_mini_complete, openai_embedding
from .operate import (
    chunking_by_token_size,
    extract_entities,
    local_query,
    global_query,
    hybird_query,
    naive_query,
)

from .storage import (
    JsonKVStorage,
    NanoVectorDBStorage,
    NetworkXStorage,
)
from .utils import (
    EmbeddingFunc,
    compute_mdhash_id,
    limit_async_func_call,
    convert_response_to_json,
    logger,
    set_logger,
)
from .base import (
    BaseGraphStorage,
    BaseKVStorage,
    BaseVectorStorage,
    StorageNameSpace,
    QueryParam,
)

def always_get_an_event_loop() -> asyncio.AbstractEventLoop:
    try:
        # If there is already an event loop, use it.
        loop = asyncio.get_event_loop()
    except RuntimeError:
        # If in a sub-thread, create a new event loop.
        logger.info("Creating a new event loop in a sub-thread.")
        loop = asyncio.new_event_loop()
        asyncio.set_event_loop(loop)
    return loop

@dataclass
class LightRAG:
    working_dir: str = field(
        default_factory=lambda: f"./lightrag_cache_{datetime.now().strftime('%Y-%m-%d-%H:%M:%S')}"
    )

    # text chunking
    chunk_token_size: int = 1200
    chunk_overlap_token_size: int = 100
    tiktoken_model_name: str = "gpt-4o-mini"

    # entity extraction
    entity_extract_max_gleaning: int = 1
    entity_summary_to_max_tokens: int = 500

    # node embedding
    node_embedding_algorithm: str = "node2vec"
    node2vec_params: dict = field(
        default_factory=lambda: {
            "dimensions": 1536,
            "num_walks": 10,
            "walk_length": 40,
            "window_size": 2,
            "iterations": 3,
            "random_seed": 3,
        }
    )

    # text embedding
    embedding_func: EmbeddingFunc = field(default_factory=lambda: openai_embedding)
    embedding_batch_num: int = 32
    embedding_func_max_async: int = 16

    # LLM
    llm_model_func: callable = gpt_4o_mini_complete
    llm_model_max_token_size: int = 32768
    llm_model_max_async: int = 16

    # storage
    key_string_value_json_storage_cls: Type[BaseKVStorage] = JsonKVStorage
    vector_db_storage_cls: Type[BaseVectorStorage] = NanoVectorDBStorage
    vector_db_storage_cls_kwargs: dict = field(default_factory=dict)
    graph_storage_cls: Type[BaseGraphStorage] = NetworkXStorage
    enable_llm_cache: bool = True

    # extension
    addon_params: dict = field(default_factory=dict)
    convert_response_to_json_func: callable = convert_response_to_json

    def __post_init__(self):
        # Create the working directory first so the log file can be opened inside it.
        created_working_dir = not os.path.exists(self.working_dir)
        if created_working_dir:
            os.makedirs(self.working_dir)

        log_file = os.path.join(self.working_dir, "lightrag.log")
        set_logger(log_file)
        logger.info(f"Logger initialized for working directory: {self.working_dir}")
        if created_working_dir:
            logger.info(f"Created working directory {self.working_dir}")

        _print_config = ",\n ".join([f"{k} = {v}" for k, v in asdict(self).items()])
        logger.debug(f"LightRAG init with param:\n {_print_config}\n")

        self.full_docs = self.key_string_value_json_storage_cls(
            namespace="full_docs", global_config=asdict(self)
        )

        self.text_chunks = self.key_string_value_json_storage_cls(
            namespace="text_chunks", global_config=asdict(self)
        )

        self.llm_response_cache = (
            self.key_string_value_json_storage_cls(
                namespace="llm_response_cache", global_config=asdict(self)
            )
            if self.enable_llm_cache
            else None
        )
        self.chunk_entity_relation_graph = self.graph_storage_cls(
            namespace="chunk_entity_relation", global_config=asdict(self)
        )
        # Cap the number of concurrent embedding calls.
        self.embedding_func = limit_async_func_call(self.embedding_func_max_async)(
            self.embedding_func
        )
        self.entities_vdb = (
            self.vector_db_storage_cls(
                namespace="entities",
                global_config=asdict(self),
                embedding_func=self.embedding_func,
                meta_fields={"entity_name"}
            )
        )
        self.relationships_vdb = (
            self.vector_db_storage_cls(
                namespace="relationships",
                global_config=asdict(self),
                embedding_func=self.embedding_func,
                meta_fields={"src_id", "tgt_id"}
            )
        )
        self.chunks_vdb = (
            self.vector_db_storage_cls(
                namespace="chunks",
                global_config=asdict(self),
                embedding_func=self.embedding_func,
            )
        )

        # Cap concurrent LLM calls and bind the response cache to the model function.
        self.llm_model_func = limit_async_func_call(self.llm_model_max_async)(
            partial(self.llm_model_func, hashing_kv=self.llm_response_cache)
        )

    def insert(self, string_or_strings):
        loop = always_get_an_event_loop()
        return loop.run_until_complete(self.ainsert(string_or_strings))

    async def ainsert(self, string_or_strings):
        try:
            if isinstance(string_or_strings, str):
                string_or_strings = [string_or_strings]

            # Document IDs are derived from content, so duplicates can be filtered out.
            new_docs = {
                compute_mdhash_id(c.strip(), prefix="doc-"): {"content": c.strip()}
                for c in string_or_strings
            }
            _add_doc_keys = await self.full_docs.filter_keys(list(new_docs.keys()))
            new_docs = {k: v for k, v in new_docs.items() if k in _add_doc_keys}
            if not len(new_docs):
                logger.warning("All docs are already in the storage")
                return
            logger.info(f"[New Docs] inserting {len(new_docs)} docs")

            inserting_chunks = {}
            for doc_key, doc in new_docs.items():
                chunks = {
                    compute_mdhash_id(dp["content"], prefix="chunk-"): {
                        **dp,
                        "full_doc_id": doc_key,
                    }
                    for dp in chunking_by_token_size(
                        doc["content"],
                        overlap_token_size=self.chunk_overlap_token_size,
                        max_token_size=self.chunk_token_size,
                        tiktoken_model=self.tiktoken_model_name,
                    )
                }
                inserting_chunks.update(chunks)
            _add_chunk_keys = await self.text_chunks.filter_keys(
                list(inserting_chunks.keys())
            )
            inserting_chunks = {
                k: v for k, v in inserting_chunks.items() if k in _add_chunk_keys
            }
            if not len(inserting_chunks):
                logger.warning("All chunks are already in the storage")
                return
            logger.info(f"[New Chunks] inserting {len(inserting_chunks)} chunks")

            await self.chunks_vdb.upsert(inserting_chunks)

            logger.info("[Entity Extraction]...")
            maybe_new_kg = await extract_entities(
                inserting_chunks,
                knwoledge_graph_inst=self.chunk_entity_relation_graph,
                entity_vdb=self.entities_vdb,
                relationships_vdb=self.relationships_vdb,
                global_config=asdict(self),
            )
            if maybe_new_kg is None:
                logger.warning("No new entities and relationships found")
                return
            self.chunk_entity_relation_graph = maybe_new_kg

            await self.full_docs.upsert(new_docs)
            await self.text_chunks.upsert(inserting_chunks)
        finally:
            await self._insert_done()

    async def _insert_done(self):
        tasks = []
        for storage_inst in [
            self.full_docs,
            self.text_chunks,
            self.llm_response_cache,
            self.entities_vdb,
            self.relationships_vdb,
            self.chunks_vdb,
            self.chunk_entity_relation_graph,
        ]:
            if storage_inst is None:
                continue
            tasks.append(cast(StorageNameSpace, storage_inst).index_done_callback())
        await asyncio.gather(*tasks)

    def query(self, query: str, param: QueryParam = QueryParam()):
        loop = always_get_an_event_loop()
        return loop.run_until_complete(self.aquery(query, param))

    async def aquery(self, query: str, param: QueryParam = QueryParam()):
        if param.mode == "local":
            response = await local_query(
                query,
                self.chunk_entity_relation_graph,
                self.entities_vdb,
                self.relationships_vdb,
                self.text_chunks,
                param,
                asdict(self),
            )
        elif param.mode == "global":
            response = await global_query(
                query,
                self.chunk_entity_relation_graph,
                self.entities_vdb,
                self.relationships_vdb,
                self.text_chunks,
                param,
                asdict(self),
            )
        elif param.mode == "hybird":
            response = await hybird_query(
                query,
                self.chunk_entity_relation_graph,
                self.entities_vdb,
                self.relationships_vdb,
                self.text_chunks,
                param,
                asdict(self),
            )
        elif param.mode == "naive":
            response = await naive_query(
                query,
                self.chunks_vdb,
                self.text_chunks,
                param,
                asdict(self),
            )
        else:
            raise ValueError(f"Unknown mode {param.mode}")
        await self._query_done()
        return response

    async def _query_done(self):
        tasks = []
        for storage_inst in [self.llm_response_cache]:
            if storage_inst is None:
                continue
            tasks.append(cast(StorageNameSpace, storage_inst).index_done_callback())
        await asyncio.gather(*tasks)

88

lightrag/llm.py

Normal file

@@ -0,0 +1,88 @@

import os
import numpy as np
from openai import AsyncOpenAI, APIConnectionError, APITimeoutError, RateLimitError
from tenacity import (
    retry,
    stop_after_attempt,
    wait_exponential,
    retry_if_exception_type,
)

from .base import BaseKVStorage
from .utils import compute_args_hash, wrap_embedding_func_with_attrs

# Retry transient API failures up to 3 times with exponential backoff.
# APITimeoutError is the timeout exception raised by the openai client;
# openai.Timeout is a configuration type, not an exception.
@retry(
    stop=stop_after_attempt(3),
    wait=wait_exponential(multiplier=1, min=4, max=10),
    retry=retry_if_exception_type((RateLimitError, APIConnectionError, APITimeoutError)),
)
async def openai_complete_if_cache(
    model, prompt, system_prompt=None, history_messages=None, **kwargs
) -> str:
    openai_async_client = AsyncOpenAI()
    hashing_kv: BaseKVStorage = kwargs.pop("hashing_kv", None)
    messages = []
    if system_prompt:
        messages.append({"role": "system", "content": system_prompt})
    # history_messages defaults to None to avoid a shared mutable default argument.
    messages.extend(history_messages or [])
    messages.append({"role": "user", "content": prompt})
    if hashing_kv is not None:
        # Return the cached completion when the same model and messages were seen before.
        args_hash = compute_args_hash(model, messages)
        if_cache_return = await hashing_kv.get_by_id(args_hash)
        if if_cache_return is not None:
            return if_cache_return["return"]

    response = await openai_async_client.chat.completions.create(
        model=model, messages=messages, **kwargs
    )

    if hashing_kv is not None:
        await hashing_kv.upsert(
            {args_hash: {"return": response.choices[0].message.content, "model": model}}
        )
    return response.choices[0].message.content

async def gpt_4o_complete(
    prompt, system_prompt=None, history_messages=None, **kwargs
) -> str:
    return await openai_complete_if_cache(
        "gpt-4o",
        prompt,
        system_prompt=system_prompt,
        history_messages=history_messages,
        **kwargs,
    )


async def gpt_4o_mini_complete(
    prompt, system_prompt=None, history_messages=None, **kwargs
) -> str:
    return await openai_complete_if_cache(
        "gpt-4o-mini",
        prompt,
        system_prompt=system_prompt,
        history_messages=history_messages,
        **kwargs,
    )

@wrap_embedding_func_with_attrs(embedding_dim=1536, max_token_size=8192)
@retry(
    stop=stop_after_attempt(3),
    wait=wait_exponential(multiplier=1, min=4, max=10),
    retry=retry_if_exception_type((RateLimitError, APIConnectionError, APITimeoutError)),
)
async def openai_embedding(texts: list[str]) -> np.ndarray:
    openai_async_client = AsyncOpenAI()
    response = await openai_async_client.embeddings.create(
        model="text-embedding-3-small", input=texts, encoding_format="float"
    )
    return np.array([dp.embedding for dp in response.data])

if __name__ == "__main__":
    import asyncio

    async def main():
        result = await gpt_4o_mini_complete('How are you?')
        print(result)

    asyncio.run(main())

944

lightrag/operate.py

Normal file

@@ -0,0 +1,944 @@

import asyncio
import json
import re
from typing import Union
from collections import Counter, defaultdict

from .utils import (
    logger,
    clean_str,
    compute_mdhash_id,
    decode_tokens_by_tiktoken,
    encode_string_by_tiktoken,
    is_float_regex,
    list_of_list_to_csv,
    pack_user_ass_to_openai_messages,
    split_string_by_multi_markers,
    truncate_list_by_token_size,
)
from .base import (
    BaseGraphStorage,
    BaseKVStorage,
    BaseVectorStorage,
    TextChunkSchema,
    QueryParam,
)
from .prompt import GRAPH_FIELD_SEP, PROMPTS

def chunking_by_token_size(
    content: str, overlap_token_size=128, max_token_size=1024, tiktoken_model="gpt-4o"
):
    tokens = encode_string_by_tiktoken(content, model_name=tiktoken_model)
    results = []
    # Slide a window of max_token_size tokens; consecutive windows share overlap_token_size tokens.
    for index, start in enumerate(
        range(0, len(tokens), max_token_size - overlap_token_size)
    ):
        chunk_content = decode_tokens_by_tiktoken(
            tokens[start : start + max_token_size], model_name=tiktoken_model
        )
        results.append(
            {
                "tokens": min(max_token_size, len(tokens) - start),
                "content": chunk_content.strip(),
                "chunk_order_index": index,
            }
        )
    return results

async def _handle_entity_relation_summary(
    entity_or_relation_name: str,
    description: str,
    global_config: dict,
) -> str:
    use_llm_func: callable = global_config["llm_model_func"]
    llm_max_tokens = global_config["llm_model_max_token_size"]
    tiktoken_model_name = global_config["tiktoken_model_name"]
    summary_max_tokens = global_config["entity_summary_to_max_tokens"]

    tokens = encode_string_by_tiktoken(description, model_name=tiktoken_model_name)
    if len(tokens) < summary_max_tokens:  # No need for summary
        return description
    prompt_template = PROMPTS["summarize_entity_descriptions"]
    # Truncate the description so the prompt fits the LLM context window.
    use_description = decode_tokens_by_tiktoken(
        tokens[:llm_max_tokens], model_name=tiktoken_model_name
    )
    context_base = dict(
        entity_name=entity_or_relation_name,
        description_list=use_description.split(GRAPH_FIELD_SEP),
    )
    use_prompt = prompt_template.format(**context_base)
    logger.debug(f"Trigger summary: {entity_or_relation_name}")
    summary = await use_llm_func(use_prompt, max_tokens=summary_max_tokens)
    return summary

async def _handle_single_entity_extraction(
    record_attributes: list[str],
    chunk_key: str,
):
    # Check the length first so an empty record cannot raise IndexError.
    if len(record_attributes) < 4 or record_attributes[0] != '"entity"':
        return None
    # add this record as a node in the G
    entity_name = clean_str(record_attributes[1].upper())
    if not entity_name.strip():
        return None
    entity_type = clean_str(record_attributes[2].upper())
    entity_description = clean_str(record_attributes[3])
    entity_source_id = chunk_key
    return dict(
        entity_name=entity_name,
        entity_type=entity_type,
        description=entity_description,
        source_id=entity_source_id,
    )

async def _handle_single_relationship_extraction(

|

||||

record_attributes: list[str],

|

||||

chunk_key: str,

|

||||

):

|

||||

if record_attributes[0] != '"relationship"' or len(record_attributes) < 5:

|

||||

return None

|

||||

# add this record as edge

|

||||

source = clean_str(record_attributes[1].upper())

|

||||

target = clean_str(record_attributes[2].upper())

|

||||

edge_description = clean_str(record_attributes[3])

|

||||

|

||||

edge_keywords = clean_str(record_attributes[4])

|

||||

edge_source_id = chunk_key

|

||||

weight = (

|

||||

float(record_attributes[-1]) if is_float_regex(record_attributes[-1]) else 1.0

|

||||

)

|

||||

return dict(

|

||||

src_id=source,

|

||||

tgt_id=target,

|

||||

weight=weight,

|

||||

description=edge_description,

|

||||

keywords=edge_keywords,

|

||||

source_id=edge_source_id,

|

||||

)
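

The two handlers above receive each record as a list of already-split attributes. The following is a minimal sketch of that parsing step, not the library's own code: the delimiter `<|>` is an assumption (the real value comes from `PROMPTS["DEFAULT_TUPLE_DELIMITER"]`, which is not shown here), and `split_by_markers` and `parse_record` are hypothetical stand-ins for `split_string_by_multi_markers` and the inline regex used in `extract_entities`.

```python
import re
from typing import Optional

TUPLE_DELIMITER = "<|>"  # assumption for illustration only


def split_by_markers(content: str, markers: list[str]) -> list[str]:
    """Simplified stand-in: split on any marker and drop empty pieces."""
    if not markers:
        return [content]
    pattern = "|".join(re.escape(m) for m in markers)
    return [part.strip() for part in re.split(pattern, content) if part.strip()]


def parse_record(raw: str) -> Optional[list[str]]:
    """Take the text inside the outermost parentheses and split it into attributes."""
    match = re.search(r"\((.*)\)", raw)
    if match is None:
        return None
    return split_by_markers(match.group(1), [TUPLE_DELIMITER])


attrs = parse_record('("entity"<|>"Scrooge"<|>"person"<|>"A miserly old man")')
print(attrs)
```

A record whose first attribute is `'"entity"'` with at least four attributes would then be accepted by the entity handler; anything else falls through to the relationship handler.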


async def _merge_nodes_then_upsert(
    entity_name: str,
    nodes_data: list[dict],
    knwoledge_graph_inst: BaseGraphStorage,
    global_config: dict,
):
    """Merge new records for one entity with any stored node, then upsert the result."""
    already_entity_types = []
    already_source_ids = []
    already_description = []

    already_node = await knwoledge_graph_inst.get_node(entity_name)
    if already_node is not None:
        already_entity_types.append(already_node["entity_type"])
        already_source_ids.extend(
            split_string_by_multi_markers(already_node["source_id"], [GRAPH_FIELD_SEP])
        )
        already_description.append(already_node["description"])

    # Pick the most frequent entity type across new and stored records
    entity_type = sorted(
        Counter(
            [dp["entity_type"] for dp in nodes_data] + already_entity_types
        ).items(),
        key=lambda x: x[1],
        reverse=True,
    )[0][0]
    description = GRAPH_FIELD_SEP.join(
        sorted(set([dp["description"] for dp in nodes_data] + already_description))
    )
    source_id = GRAPH_FIELD_SEP.join(
        set([dp["source_id"] for dp in nodes_data] + already_source_ids)
    )
    description = await _handle_entity_relation_summary(
        entity_name, description, global_config
    )
    node_data = dict(
        entity_type=entity_type,
        description=description,
        source_id=source_id,
    )
    await knwoledge_graph_inst.upsert_node(
        entity_name,
        node_data=node_data,
    )
    node_data["entity_name"] = entity_name
    return node_data
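

The merge logic above can be tried in isolation. This is a rough sketch under stated assumptions, not the function itself: `FIELD_SEP = "<SEP>"` is an assumed stand-in for `GRAPH_FIELD_SEP`, `merge_node_records` is a hypothetical helper, storage and summarization are left out, and `Counter.most_common` is swapped in for the `sorted(...)` call.

```python
from collections import Counter

FIELD_SEP = "<SEP>"  # assumption; stands in for GRAPH_FIELD_SEP


def merge_node_records(records: list[dict]) -> dict:
    """Merge several extracted records for one entity into a single record."""
    # The most frequent entity type wins
    entity_type = Counter(r["entity_type"] for r in records).most_common(1)[0][0]
    # Deduplicate descriptions and source ids, then join them with the separator
    description = FIELD_SEP.join(sorted({r["description"] for r in records}))
    source_id = FIELD_SEP.join(sorted({r["source_id"] for r in records}))
    return {
        "entity_type": entity_type,
        "description": description,
        "source_id": source_id,
    }


merged = merge_node_records(
    [
        {"entity_type": '"PERSON"', "description": "b", "source_id": "chunk-1"},
        {"entity_type": '"PERSON"', "description": "a", "source_id": "chunk-2"},
        {"entity_type": '"ORGANIZATION"', "description": "a", "source_id": "chunk-1"},
    ]
)
print(merged)
```

Unlike the function above, the sketch also sorts the source ids, which keeps the joined string deterministic across runs.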


async def _merge_edges_then_upsert(
    src_id: str,
    tgt_id: str,
    edges_data: list[dict],
    knwoledge_graph_inst: BaseGraphStorage,
    global_config: dict,
):
    already_weights = []
    already_source_ids = []
    already_description = []
    already_keywords = []

    if await knwoledge_graph_inst.has_edge(src_id, tgt_id):
        already_edge = await knwoledge_graph_inst.get_edge(src_id, tgt_id)
        already_weights.append(already_edge["weight"])
        already_source_ids.extend(
            split_string_by_multi_markers(already_edge["source_id"], [GRAPH_FIELD_SEP])
        )
        already_description.append(already_edge["description"])
        already_keywords.extend(
            split_string_by_multi_markers(already_edge["keywords"], [GRAPH_FIELD_SEP])
        )

    weight = sum([dp["weight"] for dp in edges_data] + already_weights)
    description = GRAPH_FIELD_SEP.join(
        sorted(set([dp["description"] for dp in edges_data] + already_description))
    )
    keywords = GRAPH_FIELD_SEP.join(
        sorted(set([dp["keywords"] for dp in edges_data] + already_keywords))
    )
    source_id = GRAPH_FIELD_SEP.join(
        set([dp["source_id"] for dp in edges_data] + already_source_ids)
    )
    for need_insert_id in [src_id, tgt_id]:
        if not (await knwoledge_graph_inst.has_node(need_insert_id)):
            await knwoledge_graph_inst.upsert_node(
                need_insert_id,
                node_data={
                    "source_id": source_id,
                    "description": description,
                    "entity_type": '"UNKNOWN"',
                },
            )
    description = await _handle_entity_relation_summary(
        (src_id, tgt_id), description, global_config
    )
    await knwoledge_graph_inst.upsert_edge(
        src_id,
        tgt_id,
        edge_data=dict(
            weight=weight,
            description=description,
            keywords=keywords,
            source_id=source_id,
        ),
    )

    edge_data = dict(
        src_id=src_id,
        tgt_id=tgt_id,
        description=description,
        keywords=keywords,
    )

    return edge_data


async def extract_entities(
    chunks: dict[str, TextChunkSchema],
    knwoledge_graph_inst: BaseGraphStorage,
    entity_vdb: BaseVectorStorage,
    relationships_vdb: BaseVectorStorage,
    global_config: dict,
) -> Union[BaseGraphStorage, None]:
    """Extract entities and relationships from chunks, then merge them into the stores."""
    use_llm_func: callable = global_config["llm_model_func"]
    entity_extract_max_gleaning = global_config["entity_extract_max_gleaning"]

    ordered_chunks = list(chunks.items())

    entity_extract_prompt = PROMPTS["entity_extraction"]
    context_base = dict(
        tuple_delimiter=PROMPTS["DEFAULT_TUPLE_DELIMITER"],
        record_delimiter=PROMPTS["DEFAULT_RECORD_DELIMITER"],
        completion_delimiter=PROMPTS["DEFAULT_COMPLETION_DELIMITER"],
        entity_types=",".join(PROMPTS["DEFAULT_ENTITY_TYPES"]),
    )
    continue_prompt = PROMPTS["entiti_continue_extraction"]
    if_loop_prompt = PROMPTS["entiti_if_loop_extraction"]

    already_processed = 0
    already_entities = 0
    already_relations = 0

    async def _process_single_content(chunk_key_dp: tuple[str, TextChunkSchema]):
        nonlocal already_processed, already_entities, already_relations
        chunk_key = chunk_key_dp[0]
        chunk_dp = chunk_key_dp[1]
        content = chunk_dp["content"]
        hint_prompt = entity_extract_prompt.format(**context_base, input_text=content)
        final_result = await use_llm_func(hint_prompt)

        history = pack_user_ass_to_openai_messages(hint_prompt, final_result)
        # Gleaning: ask the LLM for missed records, up to entity_extract_max_gleaning times
        for now_glean_index in range(entity_extract_max_gleaning):
            glean_result = await use_llm_func(continue_prompt, history_messages=history)

            history += pack_user_ass_to_openai_messages(continue_prompt, glean_result)
            final_result += glean_result
            if now_glean_index == entity_extract_max_gleaning - 1:
                break

            if_loop_result: str = await use_llm_func(
                if_loop_prompt, history_messages=history
            )
            if_loop_result = if_loop_result.strip().strip('"').strip("'").lower()
            if if_loop_result != "yes":
                break

        records = split_string_by_multi_markers(
            final_result,
            [context_base["record_delimiter"], context_base["completion_delimiter"]],
        )

        maybe_nodes = defaultdict(list)
        maybe_edges = defaultdict(list)
        for record in records:
            record = re.search(r"\((.*)\)", record)
            if record is None:
                continue
            record = record.group(1)
            record_attributes = split_string_by_multi_markers(
                record, [context_base["tuple_delimiter"]]
            )
            if_entities = await _handle_single_entity_extraction(
                record_attributes, chunk_key
            )
            if if_entities is not None:
                maybe_nodes[if_entities["entity_name"]].append(if_entities)
                continue

            if_relation = await _handle_single_relationship_extraction(
                record_attributes, chunk_key
            )
            if if_relation is not None:
                maybe_edges[(if_relation["src_id"], if_relation["tgt_id"])].append(
                    if_relation
                )
        already_processed += 1
        already_entities += len(maybe_nodes)
        already_relations += len(maybe_edges)
        now_ticks = PROMPTS["process_tickers"][
            already_processed % len(PROMPTS["process_tickers"])
        ]
        print(
            f"{now_ticks} Processed {already_processed} chunks, {already_entities} entities(duplicated), {already_relations} relations(duplicated)\r",
            end="",
            flush=True,
        )
        return dict(maybe_nodes), dict(maybe_edges)

    # use_llm_func is wrapped in an asyncio.Semaphore that limits concurrent calls to max_async
    results = await asyncio.gather(
        *[_process_single_content(c) for c in ordered_chunks]
    )
    print()  # clear the progress bar
    maybe_nodes = defaultdict(list)
    maybe_edges = defaultdict(list)
    for m_nodes, m_edges in results:
        for k, v in m_nodes.items():
            maybe_nodes[k].extend(v)
        for k, v in m_edges.items():
            # Sort the endpoint pair so (A, B) and (B, A) merge into one undirected edge
            maybe_edges[tuple(sorted(k))].extend(v)
    all_entities_data = await asyncio.gather(
        *[
            _merge_nodes_then_upsert(k, v, knwoledge_graph_inst, global_config)
            for k, v in maybe_nodes.items()
        ]
    )
    all_relationships_data = await asyncio.gather(
        *[
            _merge_edges_then_upsert(k[0], k[1], v, knwoledge_graph_inst, global_config)
            for k, v in maybe_edges.items()
        ]
    )
    if not len(all_entities_data):
        logger.warning("Didn't extract any entities, maybe your LLM is not working")
        return None
    if not len(all_relationships_data):
        logger.warning("Didn't extract any relationships, maybe your LLM is not working")
        return None

    if entity_vdb is not None:
        data_for_vdb = {
            compute_mdhash_id(dp["entity_name"], prefix="ent-"): {
                "content": dp["entity_name"] + dp["description"],
                "entity_name": dp["entity_name"],
            }
            for dp in all_entities_data
        }
        await entity_vdb.upsert(data_for_vdb)

    if relationships_vdb is not None:
        data_for_vdb = {
            compute_mdhash_id(dp["src_id"] + dp["tgt_id"], prefix="rel-"): {
                "src_id": dp["src_id"],
                "tgt_id": dp["tgt_id"],
                "content": dp["keywords"] + dp["src_id"] + dp["tgt_id"] + dp["description"],
            }
            for dp in all_relationships_data
        }
        await relationships_vdb.upsert(data_for_vdb)

    return knwoledge_graph_inst


async def local_query(
    query,
    knowledge_graph_inst: BaseGraphStorage,
    entities_vdb: BaseVectorStorage,
    relationships_vdb: BaseVectorStorage,
    text_chunks_db: BaseKVStorage[TextChunkSchema],
    query_param: QueryParam,
    global_config: dict,
) -> str:
    """Answer a query from entity-centred context built from its low-level keywords."""
    use_model_func = global_config["llm_model_func"]

    kw_prompt_temp = PROMPTS["keywords_extraction"]
    kw_prompt = kw_prompt_temp.format(query=query)
    result = await use_model_func(kw_prompt)

    try:
        keywords_data = json.loads(result)
        keywords = keywords_data.get("low_level_keywords", [])
        keywords = ", ".join(keywords)
    except json.JSONDecodeError as e:
        # The LLM did not return valid JSON; give up on this query
        print(f"JSON parsing error: {e}")
        return PROMPTS["fail_response"]

    context = await _build_local_query_context(
        keywords,
        knowledge_graph_inst,
        entities_vdb,
        text_chunks_db,
        query_param,
    )
    if query_param.only_need_context:
        return context
    if context is None:
        return PROMPTS["fail_response"]
    sys_prompt_temp = PROMPTS["rag_response"]
    sys_prompt = sys_prompt_temp.format(
        context_data=context, response_type=query_param.response_type
    )
    response = await use_model_func(
        query,
        system_prompt=sys_prompt,
    )
    return response
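

The keyword-parsing step in `local_query` can be exercised without an LLM. The sketch below is illustrative only: `extract_keywords` is a hypothetical helper, and the JSON shape (an object with a `low_level_keywords` list) is inferred from the `.get("low_level_keywords", [])` call above rather than from the prompt text, which is not shown here.

```python
import json
from typing import Optional


def extract_keywords(llm_output: str, level: str = "low_level_keywords") -> Optional[str]:
    """Return a comma-separated keyword string, or None if the output is unusable."""
    try:
        data = json.loads(llm_output)
    except json.JSONDecodeError:
        return None
    # Guard against valid JSON that is not an object (e.g. a bare list)
    if not isinstance(data, dict):
        return None
    return ", ".join(data.get(level, []))


good = extract_keywords('{"low_level_keywords": ["Scrooge", "ghost"]}')
bad = extract_keywords("Sure! Here are the keywords: Scrooge, ghost")
print(good, bad)
```

Returning `None` here plays the role of returning `PROMPTS["fail_response"]` in the function above.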


async def _build_local_query_context(
    query,
    knowledge_graph_inst: BaseGraphStorage,
    entities_vdb: BaseVectorStorage,
    text_chunks_db: BaseKVStorage[TextChunkSchema],
    query_param: QueryParam,
):
    results = await entities_vdb.query(query, top_k=query_param.top_k)
    if not len(results):
        return None
    node_datas = await asyncio.gather(
        *[knowledge_graph_inst.get_node(r["entity_name"]) for r in results]
    )
    if not all([n is not None for n in node_datas]):
        logger.warning("Some nodes are missing, maybe the storage is damaged")
    node_degrees = await asyncio.gather(
        *[knowledge_graph_inst.node_degree(r["entity_name"]) for r in results]
    )
    # Attach the entity name and its degree (used as rank); drop missing nodes
    node_datas = [
        {**n, "entity_name": k["entity_name"], "rank": d}
        for k, n, d in zip(results, node_datas, node_degrees)
        if n is not None
    ]
    use_text_units = await _find_most_related_text_unit_from_entities(
        node_datas, query_param, text_chunks_db, knowledge_graph_inst
    )
    use_relations = await _find_most_related_edges_from_entities(
        node_datas, query_param, knowledge_graph_inst
    )
    logger.info(
        f"Local query uses {len(node_datas)} entities, {len(use_relations)} relations, {len(use_text_units)} text units"
    )
    entities_section_list = [["id", "entity", "type", "description", "rank"]]
    for i, n in enumerate(node_datas):
        entities_section_list.append(
            [
                i,
                n["entity_name"],
                n.get("entity_type", "UNKNOWN"),
                n.get("description", "UNKNOWN"),
                n["rank"],
            ]
        )
    entities_context = list_of_list_to_csv(entities_section_list)

    relations_section_list = [
        ["id", "source", "target", "description", "keywords", "weight", "rank"]
    ]
    for i, e in enumerate(use_relations):
        relations_section_list.append(
            [
                i,
                e["src_tgt"][0],
                e["src_tgt"][1],
                e["description"],
                e["keywords"],
                e["weight"],
                e["rank"],
            ]
        )
    relations_context = list_of_list_to_csv(relations_section_list)

    text_units_section_list = [["id", "content"]]
    for i, t in enumerate(use_text_units):
        text_units_section_list.append([i, t["content"]])
    text_units_context = list_of_list_to_csv(text_units_section_list)
    return f"""
-----Entities-----
```csv
{entities_context}
```
-----Relationships-----
```csv
{relations_context}
```
-----Sources-----
```csv
{text_units_context}
```
"""


async def _find_most_related_text_unit_from_entities(
    node_datas: list[dict],
    query_param: QueryParam,
    text_chunks_db: BaseKVStorage[TextChunkSchema],
    knowledge_graph_inst: BaseGraphStorage,
):
    text_units = [
        split_string_by_multi_markers(dp["source_id"], [GRAPH_FIELD_SEP])
        for dp in node_datas
    ]
    edges = await asyncio.gather(
        *[knowledge_graph_inst.get_node_edges(dp["entity_name"]) for dp in node_datas]
    )
    all_one_hop_nodes = set()
    for this_edges in edges:
        if not this_edges:
            continue
        all_one_hop_nodes.update([e[1] for e in this_edges])
    all_one_hop_nodes = list(all_one_hop_nodes)
    all_one_hop_nodes_data = await asyncio.gather(
        *[knowledge_graph_inst.get_node(e) for e in all_one_hop_nodes]
    )
    all_one_hop_text_units_lookup = {
        k: set(split_string_by_multi_markers(v["source_id"], [GRAPH_FIELD_SEP]))
        for k, v in zip(all_one_hop_nodes, all_one_hop_nodes_data)
        if v is not None
    }
    all_text_units_lookup = {}
    for index, (this_text_units, this_edges) in enumerate(zip(text_units, edges)):
        for c_id in this_text_units:
            if c_id in all_text_units_lookup:
                continue
            # Count the one-hop neighbours that also cite this chunk
            relation_counts = 0
            for e in this_edges or []:  # tolerate a node with no edge list
                if (
                    e[1] in all_one_hop_text_units_lookup
                    and c_id in all_one_hop_text_units_lookup[e[1]]
                ):
                    relation_counts += 1
            all_text_units_lookup[c_id] = {
                "data": await text_chunks_db.get_by_id(c_id),
                "order": index,
                "relation_counts": relation_counts,
            }
    # Each lookup value is a dict, so a missing chunk shows up as v["data"] being None
    if any([v["data"] is None for v in all_text_units_lookup.values()]):
        logger.warning("Text chunks are missing, maybe the storage is damaged")
    all_text_units = [
        {"id": k, **v}
        for k, v in all_text_units_lookup.items()
        if v["data"] is not None
    ]
    all_text_units = sorted(
        all_text_units, key=lambda x: (x["order"], -x["relation_counts"])
    )
    all_text_units = truncate_list_by_token_size(
        all_text_units,
        key=lambda x: x["data"]["content"],
        max_token_size=query_param.max_token_for_text_unit,
    )
    all_text_units: list[TextChunkSchema] = [t["data"] for t in all_text_units]
    return all_text_units
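

The ranking and truncation at the end of the function above can be illustrated with plain dictionaries. This is a sketch under assumptions, not the library's helper: `truncate_by_budget` is a hypothetical stand-in for `truncate_list_by_token_size`, and it counts whitespace-separated words instead of tiktoken tokens.

```python
def truncate_by_budget(items, key, max_tokens):
    """Keep leading items until the running token count would exceed max_tokens."""
    total = 0
    for i, item in enumerate(items):
        total += len(key(item).split())  # crude word count in place of real tokens
        if total > max_tokens:
            return items[:i]
    return items


units = [
    {"id": "c2", "order": 1, "relation_counts": 0, "content": "three more words"},
    {"id": "c1", "order": 0, "relation_counts": 1, "content": "two words"},
    {"id": "c3", "order": 0, "relation_counts": 3, "content": "one two three four"},
]

# Chunks of higher-ranked entities come first; ties break on more shared neighbours
ranked = sorted(units, key=lambda u: (u["order"], -u["relation_counts"]))
kept = truncate_by_budget(ranked, key=lambda u: u["content"], max_tokens=6)
print([u["id"] for u in kept])
```

Because the list is sorted before truncation, the budget is spent on the chunks tied to the best-matching entities first.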


async def _find_most_related_edges_from_entities(
    node_datas: list[dict],
    query_param: QueryParam,
    knowledge_graph_inst: BaseGraphStorage,
):
    all_related_edges = await asyncio.gather(
        *[knowledge_graph_inst.get_node_edges(dp["entity_name"]) for dp in node_datas]
    )
    all_edges = set()
    for this_edges in all_related_edges:
        all_edges.update([tuple(sorted(e)) for e in this_edges])
    all_edges = list(all_edges)
    all_edges_pack = await asyncio.gather(
        *[knowledge_graph_inst.get_edge(e[0], e[1]) for e in all_edges]
    )
    all_edges_degree = await asyncio.gather(
        *[knowledge_graph_inst.edge_degree(e[0], e[1]) for e in all_edges]
    )
    all_edges_data = [
        {"src_tgt": k, "rank": d, **v}
        for k, v, d in zip(all_edges, all_edges_pack, all_edges_degree)
        if v is not None
    ]
    all_edges_data = sorted(
        all_edges_data, key=lambda x: (x["rank"], x["weight"]), reverse=True
    )
    all_edges_data = truncate_list_by_token_size(
        all_edges_data,
        key=lambda x: x["description"],
        max_token_size=query_param.max_token_for_global_context,
    )
    return all_edges_data


async def global_query(
    query,
    knowledge_graph_inst: BaseGraphStorage,
    entities_vdb: BaseVectorStorage,
    relationships_vdb: BaseVectorStorage,
    text_chunks_db: BaseKVStorage[TextChunkSchema],
    query_param: QueryParam,
    global_config: dict,
) -> str:
    """Answer a query from relationship-centred context built from its high-level keywords."""
    use_model_func = global_config["llm_model_func"]

    kw_prompt_temp = PROMPTS["keywords_extraction"]
    kw_prompt = kw_prompt_temp.format(query=query)
    result = await use_model_func(kw_prompt)

    try:
        keywords_data = json.loads(result)
        keywords = keywords_data.get("high_level_keywords", [])
        keywords = ", ".join(keywords)
    except json.JSONDecodeError as e:
        # The LLM did not return valid JSON; give up on this query
        print(f"JSON parsing error: {e}")
        return PROMPTS["fail_response"]

    context = await _build_global_query_context(
        keywords,
        knowledge_graph_inst,
        entities_vdb,
        relationships_vdb,
        text_chunks_db,
        query_param,
    )

    if query_param.only_need_context:
        return context
    if context is None:
        return PROMPTS["fail_response"]

    sys_prompt_temp = PROMPTS["rag_response"]
    sys_prompt = sys_prompt_temp.format(
        context_data=context, response_type=query_param.response_type
    )
    response = await use_model_func(
        query,
        system_prompt=sys_prompt,
    )
    return response


async def _build_global_query_context(
    keywords,
    knowledge_graph_inst: BaseGraphStorage,
    entities_vdb: BaseVectorStorage,
    relationships_vdb: BaseVectorStorage,
    text_chunks_db: BaseKVStorage[TextChunkSchema],
    query_param: QueryParam,
):
    results = await relationships_vdb.query(keywords, top_k=query_param.top_k)

    if not len(results):
        return None

    edge_datas = await asyncio.gather(
        *[knowledge_graph_inst.get_edge(r["src_id"], r["tgt_id"]) for r in results]
    )

    if not all([n is not None for n in edge_datas]):
        logger.warning("Some edges are missing, maybe the storage is damaged")
    edge_degree = await asyncio.gather(
        *[knowledge_graph_inst.edge_degree(r["src_id"], r["tgt_id"]) for r in results]
    )
    # Attach endpoints and the edge degree (used as rank); drop missing edges
    edge_datas = [
        {"src_id": k["src_id"], "tgt_id": k["tgt_id"], "rank": d, **v}
        for k, v, d in zip(results, edge_datas, edge_degree)
        if v is not None
    ]
    edge_datas = sorted(
        edge_datas, key=lambda x: (x["rank"], x["weight"]), reverse=True
    )
    edge_datas = truncate_list_by_token_size(
        edge_datas,
        key=lambda x: x["description"],
        max_token_size=query_param.max_token_for_global_context,
    )

    use_entities = await _find_most_related_entities_from_relationships(
        edge_datas, query_param, knowledge_graph_inst
    )
    use_text_units = await _find_related_text_unit_from_relationships(
        edge_datas, query_param, text_chunks_db, knowledge_graph_inst
    )
    logger.info(
        f"Global query uses {len(use_entities)} entities, {len(edge_datas)} relations, {len(use_text_units)} text units"
    )
    relations_section_list = [
        ["id", "source", "target", "description", "keywords", "weight", "rank"]
    ]
    for i, e in enumerate(edge_datas):
        relations_section_list.append(
            [
                i,
                e["src_id"],
                e["tgt_id"],
                e["description"],
                e["keywords"],
                e["weight"],
                e["rank"],
            ]
        )
    relations_context = list_of_list_to_csv(relations_section_list)

    entities_section_list = [["id", "entity", "type", "description", "rank"]]
    for i, n in enumerate(use_entities):
        entities_section_list.append(
            [
                i,
                n["entity_name"],
                n.get("entity_type", "UNKNOWN"),
                n.get("description", "UNKNOWN"),
                n["rank"],
            ]
        )
    entities_context = list_of_list_to_csv(entities_section_list)

    text_units_section_list = [["id", "content"]]
    for i, t in enumerate(use_text_units):
        text_units_section_list.append([i, t["content"]])
    text_units_context = list_of_list_to_csv(text_units_section_list)

    return f"""
-----Entities-----
```csv
{entities_context}
```
-----Relationships-----
```csv
{relations_context}