Merge pull request #645 from ParisNeo/main

Major Refactoring: LLM Components, UI Updates, and Dependency Management
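Most hunks below move provider helpers out of the flat `lightrag.llm` namespace into per-provider submodules (for example `lightrag.llm.openai`) and rename the `*_embedding` functions to `*_embed`. Downstream code that has to run against both layouts could try the new location first and fall back to the old one. The sketch below only illustrates that idea: `resolve_symbol` is a hypothetical helper, not part of LightRAG, and it is demonstrated with standard-library modules so it runs without LightRAG installed.

```python
import importlib
from typing import Any, Iterable, Tuple


def resolve_symbol(candidates: Iterable[Tuple[str, str]]) -> Any:
    """Return the first attribute found among (module_path, attr_name) pairs."""
    errors = []
    for module_path, attr_name in candidates:
        try:
            module = importlib.import_module(module_path)
            return getattr(module, attr_name)
        except (ImportError, AttributeError) as exc:
            # Remember why this candidate failed and try the next one.
            errors.append(f"{module_path}.{attr_name}: {exc}")
    raise ImportError("no candidate could be imported:\n" + "\n".join(errors))


# Stand-in demonstration: the first module does not exist, the second does.
sqrt = resolve_symbol([("math_new_layout", "sqrt"), ("math", "sqrt")])
print(sqrt(9.0))
```

With LightRAG, the candidate list would look something like `[("lightrag.llm.openai", "openai_embed"), ("lightrag.llm", "openai_embedding")]`, following the renames shown in this diff.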


2

.gitignore

vendored


@@ -22,3 +22,5 @@ venv/
 examples/input/
 examples/output/
 .DS_Store
+#Remove config.ini from repo
+*.ini

50

README.md


@@ -1,28 +1,38 @@

|

||||

<center><h2>🚀 LightRAG: Simple and Fast Retrieval-Augmented Generation</h2></center>

|

||||

|

||||

|

||||

|

||||

|

||||

<div align='center'>

|

||||

<p>

|

||||

<div align="center">

|

||||

<table border="0" width="100%">

|

||||

<tr>

|

||||

<td width="100" align="center">

|

||||

<img src="https://github.com/user-attachments/assets/cb5b8fc1-0859-4f7c-8ec3-63c8ec7aa54b" width="80" height="80" alt="lightrag">

|

||||

</td>

|

||||

<td>

|

||||

<div>

|

||||

<p>

|

||||

<a href='https://lightrag.github.io'><img src='https://img.shields.io/badge/Project-Page-Green'></a>

|

||||

<a href='https://youtu.be/oageL-1I0GE'><img src='https://badges.aleen42.com/src/youtube.svg'></a>

|

||||

<a href='https://arxiv.org/abs/2410.05779'><img src='https://img.shields.io/badge/arXiv-2410.05779-b31b1b'></a>

|

||||

<a href='https://learnopencv.com/lightrag'><img src='https://img.shields.io/badge/LearnOpenCV-blue'></a>

|

||||

</p>

|

||||

<p>

|

||||

<img src='https://img.shields.io/github/stars/hkuds/lightrag?color=green&style=social' />

|

||||

<p>

|

||||

<img src='https://img.shields.io/github/stars/hkuds/lightrag?color=green&style=social' />

|

||||

<img src="https://img.shields.io/badge/python-3.10-blue">

|

||||

<a href="https://pypi.org/project/lightrag-hku/"><img src="https://img.shields.io/pypi/v/lightrag-hku.svg"></a>

|

||||

<a href="https://pepy.tech/project/lightrag-hku"><img src="https://static.pepy.tech/badge/lightrag-hku/month"></a>

|

||||

</p>

|

||||

<p>

|

||||

<a href='https://discord.gg/yF2MmDJyGJ'><img src='https://discordapp.com/api/guilds/1296348098003734629/widget.png?style=shield'></a>

|

||||

<a href='https://discord.gg/yF2MmDJyGJ'><img src='https://discordapp.com/api/guilds/1296348098003734629/widget.png?style=shield'></a>

|

||||

<a href='https://github.com/HKUDS/LightRAG/issues/285'><img src='https://img.shields.io/badge/群聊-wechat-green'></a>

|

||||

</p>

|

||||

</div>

|

||||

</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

This repository hosts the code of LightRAG. The structure of this code is based on [nano-graphrag](https://github.com/gusye1234/nano-graphrag).

|

||||

|

||||

<div align="center">

|

||||

This repository hosts the code of LightRAG. The structure of this code is based on <a href="https://github.com/gusye1234/nano-graphrag">nano-graphrag</a>.

|

||||

|

||||

<img src="https://i-blog.csdnimg.cn/direct/b2aaf634151b4706892693ffb43d9093.png" width="800" alt="LightRAG Diagram">

|

||||

</div>

|

||||

</div>

|

||||

|

||||

## 🎉 News

|

||||

@@ -36,18 +46,14 @@ This repository hosts the code of LightRAG. The structure of this code is based

|

||||

- [x] [2024.11.09]🎯📢Introducing the [LightRAG Gui](https://lightrag-gui.streamlit.app), which allows you to insert, query, visualize, and download LightRAG knowledge.

|

||||

- [x] [2024.11.04]🎯📢You can now [use Neo4J for Storage](https://github.com/HKUDS/LightRAG?tab=readme-ov-file#using-neo4j-for-storage).

|

||||

- [x] [2024.10.29]🎯📢LightRAG now supports multiple file types, including PDF, DOC, PPT, and CSV via `textract`.

|

||||

- [x] [2024.10.20]🎯📢We’ve added a new feature to LightRAG: Graph Visualization.

|

||||

- [x] [2024.10.18]🎯📢We’ve added a link to a [LightRAG Introduction Video](https://youtu.be/oageL-1I0GE). Thanks to the author!

|

||||

- [x] [2024.10.20]🎯📢We've added a new feature to LightRAG: Graph Visualization.

|

||||

- [x] [2024.10.18]🎯📢We've added a link to a [LightRAG Introduction Video](https://youtu.be/oageL-1I0GE). Thanks to the author!

|

||||

- [x] [2024.10.17]🎯📢We have created a [Discord channel](https://discord.gg/yF2MmDJyGJ)! Welcome to join for sharing and discussions! 🎉🎉

|

||||

- [x] [2024.10.16]🎯📢LightRAG now supports [Ollama models](https://github.com/HKUDS/LightRAG?tab=readme-ov-file#quick-start)!

|

||||

- [x] [2024.10.15]🎯📢LightRAG now supports [Hugging Face models](https://github.com/HKUDS/LightRAG?tab=readme-ov-file#quick-start)!

|

||||

|

||||

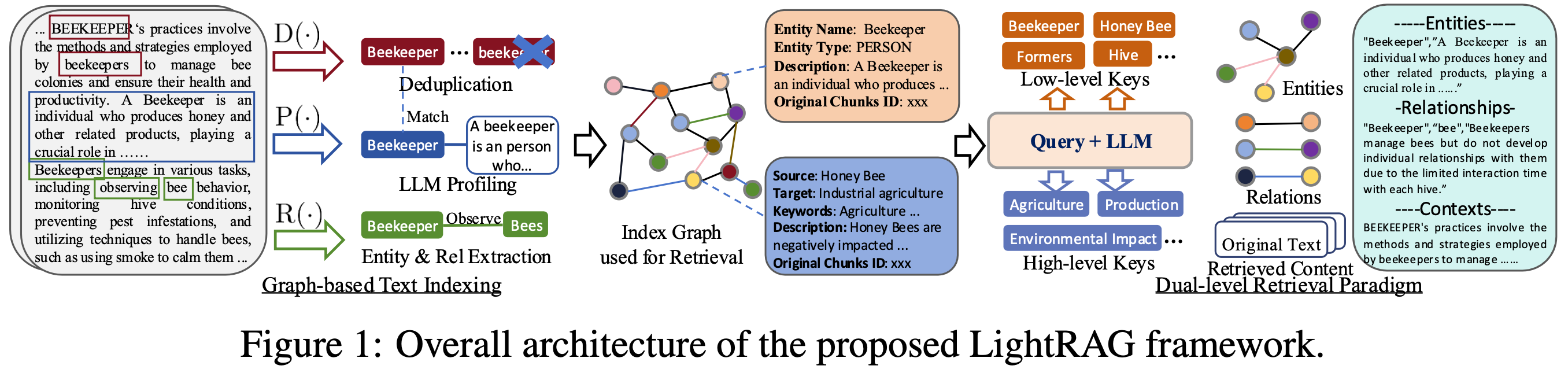

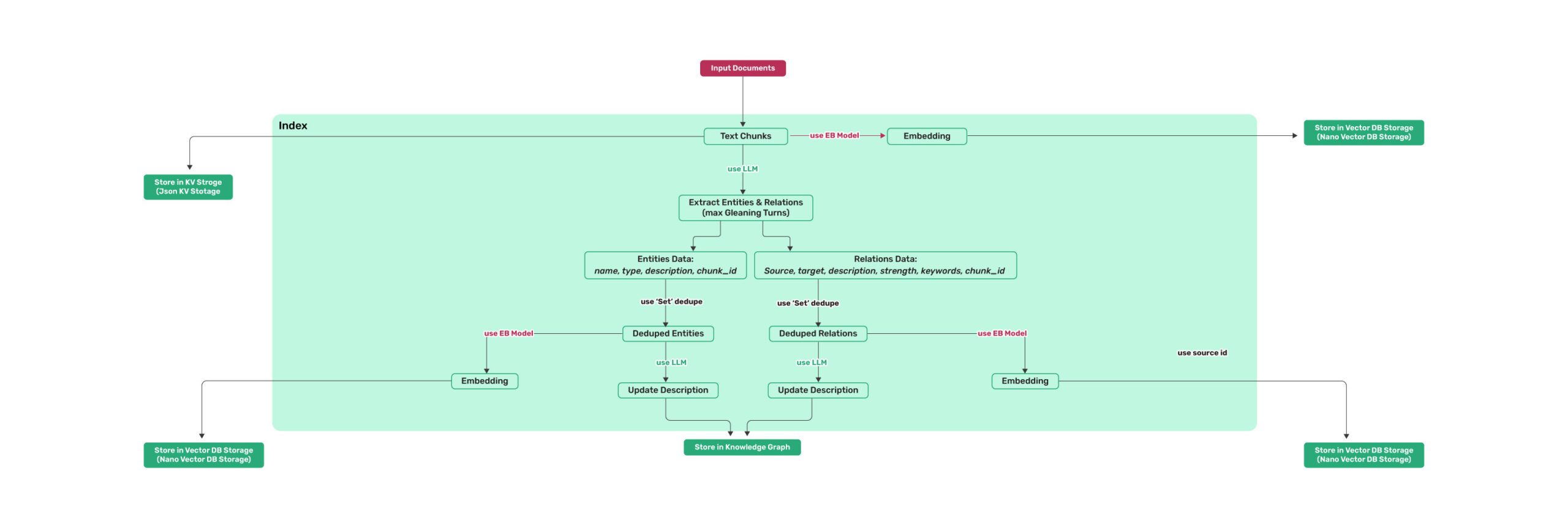

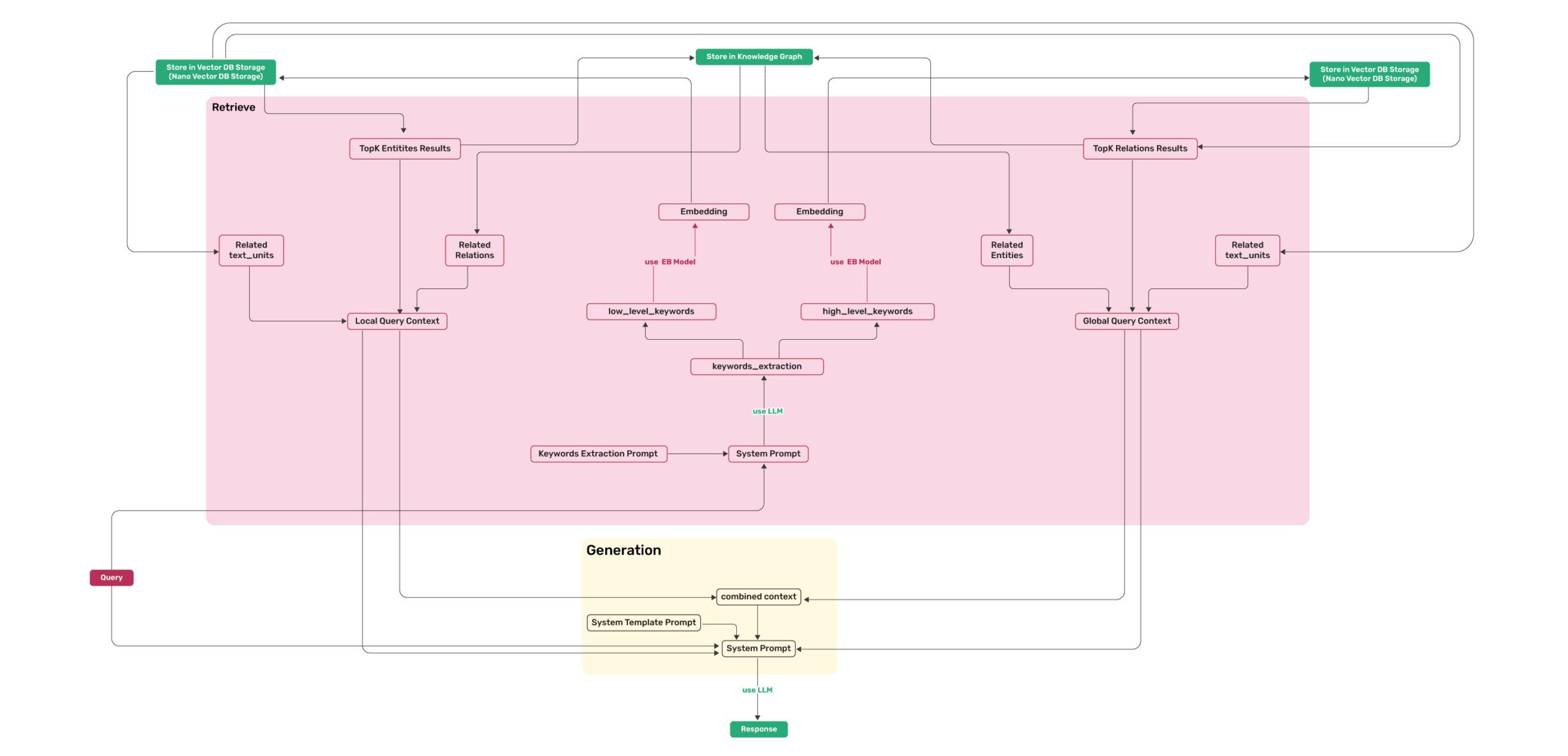

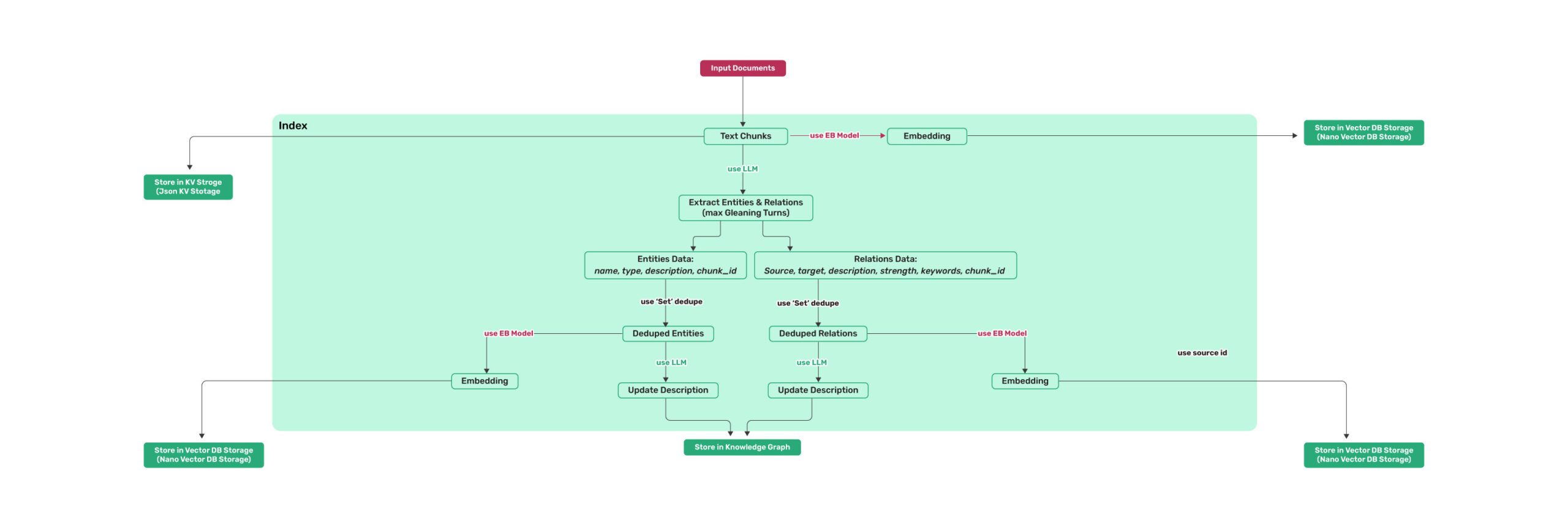

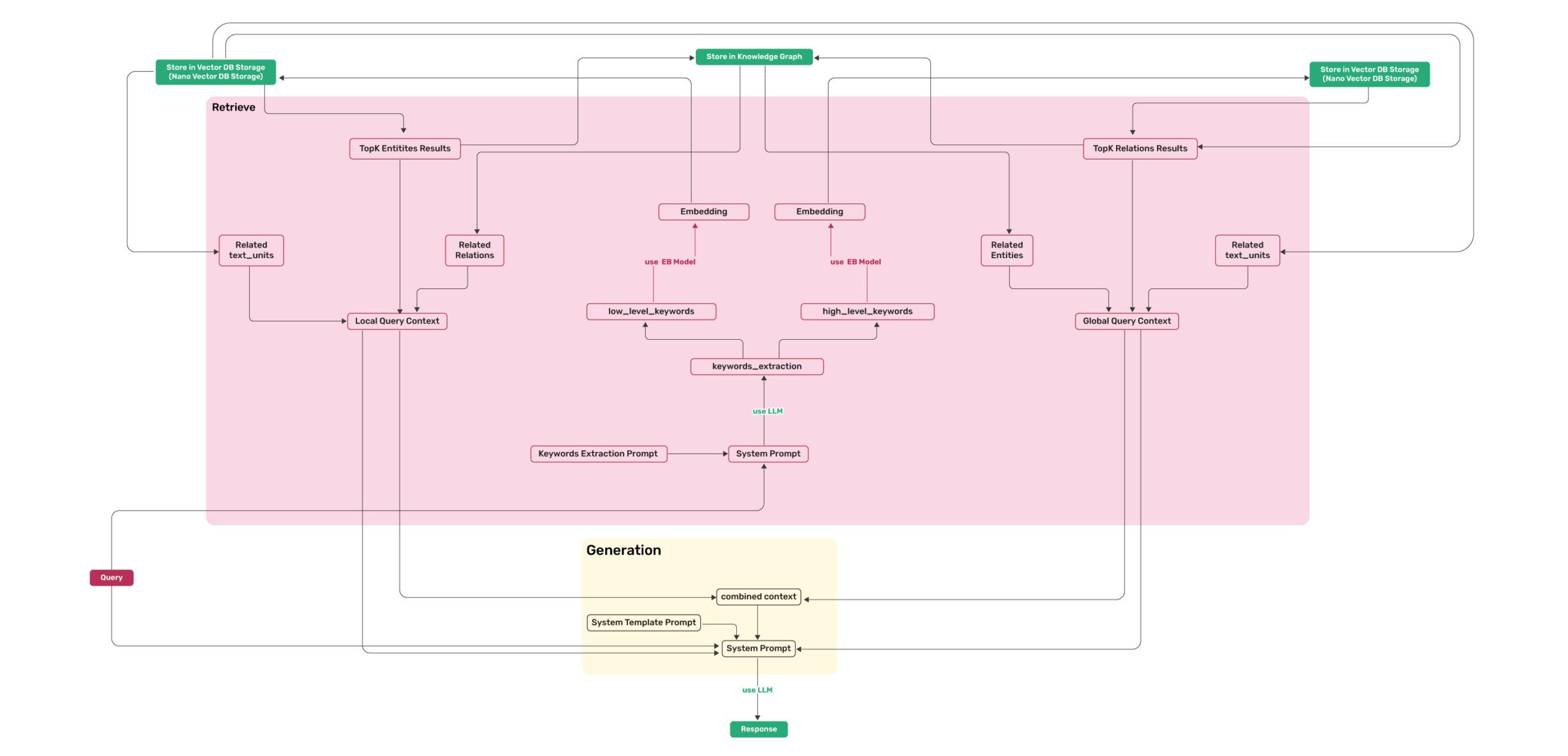

## Algorithm Flowchart

|

||||

|

||||

|

||||

*Figure 1: LightRAG Indexing Flowchart - Img Caption : [Source](https://learnopencv.com/lightrag/)*

|

||||

|

||||

*Figure 2: LightRAG Retrieval and Querying Flowchart - Img Caption : [Source](https://learnopencv.com/lightrag/)*

|

||||

|

||||

## Install

|

||||

|

||||

@@ -75,7 +81,7 @@ Use the below Python snippet (in a script) to initialize LightRAG and perform qu

|

||||

```python

|

||||

import os

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import gpt_4o_mini_complete, gpt_4o_complete

|

||||

from lightrag.llm.openai import gpt_4o_mini_complete, gpt_4o_complete

|

||||

|

||||

#########

|

||||

# Uncomment the below two lines if running in a jupyter notebook to handle the async nature of rag.insert()

|

||||

@@ -171,7 +177,7 @@ async def llm_model_func(

|

||||

)

|

||||

|

||||

async def embedding_func(texts: list[str]) -> np.ndarray:

|

||||

return await openai_embedding(

|

||||

return await openai_embed(

|

||||

texts,

|

||||

model="solar-embedding-1-large-query",

|

||||

api_key=os.getenv("UPSTAGE_API_KEY"),

|

||||

@@ -227,7 +233,7 @@ If you want to use Ollama models, you need to pull model you plan to use and emb

|

||||

Then you only need to set LightRAG as follows:

|

||||

|

||||

```python

|

||||

from lightrag.llm import ollama_model_complete, ollama_embedding

|

||||

from lightrag.llm.ollama import ollama_model_complete, ollama_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

|

||||

# Initialize LightRAG with Ollama model

|

||||

@@ -239,7 +245,7 @@ rag = LightRAG(

|

||||

embedding_func=EmbeddingFunc(

|

||||

embedding_dim=768,

|

||||

max_token_size=8192,

|

||||

func=lambda texts: ollama_embedding(

|

||||

func=lambda texts: ollama_embed(

|

||||

texts,

|

||||

embed_model="nomic-embed-text"

|

||||

)

|

||||

@@ -684,7 +690,7 @@ if __name__ == "__main__":

|

||||

| **entity\_summary\_to\_max\_tokens** | `int` | Maximum token size for each entity summary | `500` |

|

||||

| **node\_embedding\_algorithm** | `str` | Algorithm for node embedding (currently not used) | `node2vec` |

|

||||

| **node2vec\_params** | `dict` | Parameters for node embedding | `{"dimensions": 1536,"num_walks": 10,"walk_length": 40,"window_size": 2,"iterations": 3,"random_seed": 3,}` |

|

||||

| **embedding\_func** | `EmbeddingFunc` | Function to generate embedding vectors from text | `openai_embedding` |

|

||||

| **embedding\_func** | `EmbeddingFunc` | Function to generate embedding vectors from text | `openai_embed` |

|

||||

| **embedding\_batch\_num** | `int` | Maximum batch size for embedding processes (multiple texts sent per batch) | `32` |

|

||||

| **embedding\_func\_max\_async** | `int` | Maximum number of concurrent asynchronous embedding processes | `16` |

|

||||

| **llm\_model\_func** | `callable` | Function for LLM generation | `gpt_4o_mini_complete` |

|

||||

|

||||

13

config.ini


@@ -1,13 +0,0 @@
-[redis]
-uri = redis://localhost:6379
-
-[neo4j]
-uri = #
-username = neo4j
-password = 12345678
-
-[milvus]
-uri = #
-user = root
-password = Milvus
-db_name = lightrag

|

||||

4

docs/Algorithm.md

Normal file


@@ -0,0 +1,4 @@

|

||||

|

||||

*Figure 1: LightRAG Indexing Flowchart - Img Caption : [Source](https://learnopencv.com/lightrag/)*

|

||||

|

||||

*Figure 2: LightRAG Retrieval and Querying Flowchart - Img Caption : [Source](https://learnopencv.com/lightrag/)*

|

||||

@@ -1,6 +1,6 @@

|

||||

import os

|

||||

from lightrag import LightRAG

|

||||

from lightrag.llm import gpt_4o_mini_complete

|

||||

from lightrag.llm.openai import gpt_4o_mini_complete

|

||||

#########

|

||||

# Uncomment the below two lines if running in a jupyter notebook to handle the async nature of rag.insert()

|

||||

# import nest_asyncio

|

||||

|

||||

@@ -2,7 +2,7 @@ from fastapi import FastAPI, HTTPException, File, UploadFile

|

||||

from pydantic import BaseModel

|

||||

import os

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import ollama_embedding, ollama_model_complete

|

||||

from lightrag.llm.ollama import ollama_embed, ollama_model_complete

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

from typing import Optional

|

||||

import asyncio

|

||||

@@ -38,7 +38,7 @@ rag = LightRAG(

|

||||

embedding_func=EmbeddingFunc(

|

||||

embedding_dim=768,

|

||||

max_token_size=8192,

|

||||

func=lambda texts: ollama_embedding(

|

||||

func=lambda texts: ollama_embed(

|

||||

texts, embed_model="nomic-embed-text", host="http://localhost:11434"

|

||||

),

|

||||

),

|

||||

|

||||

@@ -9,7 +9,7 @@ from typing import Optional

|

||||

import os

|

||||

import logging

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import ollama_model_complete, ollama_embed

|

||||

from lightrag.llm.ollama import ollama_model_complete, ollama_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

|

||||

import nest_asyncio

|

||||

|

||||

@@ -2,7 +2,7 @@ from fastapi import FastAPI, HTTPException, File, UploadFile

|

||||

from pydantic import BaseModel

|

||||

import os

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import openai_complete_if_cache, openai_embedding

|

||||

from lightrag.llm.openai import openai_complete_if_cache, openai_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

import numpy as np

|

||||

from typing import Optional

|

||||

@@ -48,7 +48,7 @@ async def llm_model_func(

|

||||

|

||||

|

||||

async def embedding_func(texts: list[str]) -> np.ndarray:

|

||||

return await openai_embedding(

|

||||

return await openai_embed(

|

||||

texts,

|

||||

model=EMBEDDING_MODEL,

|

||||

)

|

||||

|

||||

@@ -13,7 +13,7 @@ from pathlib import Path

|

||||

import asyncio

|

||||

import nest_asyncio

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import openai_complete_if_cache, openai_embedding

|

||||

from lightrag.llm.openai import openai_complete_if_cache, openai_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

import numpy as np

|

||||

|

||||

@@ -64,7 +64,7 @@ async def llm_model_func(

|

||||

|

||||

|

||||

async def embedding_func(texts: list[str]) -> np.ndarray:

|

||||

return await openai_embedding(

|

||||

return await openai_embed(

|

||||

texts,

|

||||

model=EMBEDDING_MODEL,

|

||||

api_key=APIKEY,

|

||||

|

||||

@@ -6,7 +6,7 @@ import os

|

||||

import logging

|

||||

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import bedrock_complete, bedrock_embedding

|

||||

from lightrag.llm.bedrock import bedrock_complete, bedrock_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

|

||||

logging.getLogger("aiobotocore").setLevel(logging.WARNING)

|

||||

@@ -20,7 +20,7 @@ rag = LightRAG(

|

||||

llm_model_func=bedrock_complete,

|

||||

llm_model_name="Anthropic Claude 3 Haiku // Amazon Bedrock",

|

||||

embedding_func=EmbeddingFunc(

|

||||

embedding_dim=1024, max_token_size=8192, func=bedrock_embedding

|

||||

embedding_dim=1024, max_token_size=8192, func=bedrock_embed

|

||||

),

|

||||

)

|

||||

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

import os

|

||||

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import hf_model_complete, hf_embedding

|

||||

from lightrag.llm.hf import hf_model_complete, hf_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

from transformers import AutoModel, AutoTokenizer

|

||||

|

||||

@@ -17,7 +17,7 @@ rag = LightRAG(

|

||||

embedding_func=EmbeddingFunc(

|

||||

embedding_dim=384,

|

||||

max_token_size=5000,

|

||||

func=lambda texts: hf_embedding(

|

||||

func=lambda texts: hf_embed(

|

||||

texts,

|

||||

tokenizer=AutoTokenizer.from_pretrained(

|

||||

"sentence-transformers/all-MiniLM-L6-v2"

|

||||

|

||||

@@ -1,13 +1,14 @@

|

||||

import numpy as np

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

from lightrag.llm import jina_embedding, openai_complete_if_cache

|

||||

from lightrag.llm.jina import jina_embed

|

||||

from lightrag.llm.openai import openai_complete_if_cache

|

||||

import os

|

||||

import asyncio

|

||||

|

||||

|

||||

async def embedding_func(texts: list[str]) -> np.ndarray:

|

||||

return await jina_embedding(texts, api_key="YourJinaAPIKey")

|

||||

return await jina_embed(texts, api_key="YourJinaAPIKey")

|

||||

|

||||

|

||||

WORKING_DIR = "./dickens"

|

||||

|

||||

@@ -1,7 +1,8 @@

|

||||

import os

|

||||

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import lmdeploy_model_if_cache, hf_embedding

|

||||

from lightrag.llm.lmdeploy import lmdeploy_model_if_cache

|

||||

from lightrag.llm.hf import hf_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

from transformers import AutoModel, AutoTokenizer

|

||||

|

||||

@@ -42,7 +43,7 @@ rag = LightRAG(

|

||||

embedding_func=EmbeddingFunc(

|

||||

embedding_dim=384,

|

||||

max_token_size=5000,

|

||||

func=lambda texts: hf_embedding(

|

||||

func=lambda texts: hf_embed(

|

||||

texts,

|

||||

tokenizer=AutoTokenizer.from_pretrained(

|

||||

"sentence-transformers/all-MiniLM-L6-v2"

|

||||

|

||||

@@ -3,7 +3,7 @@ import asyncio

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import (

|

||||

openai_complete_if_cache,

|

||||

nvidia_openai_embedding,

|

||||

nvidia_openai_embed,

|

||||

)

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

import numpy as np

|

||||

@@ -47,7 +47,7 @@ nvidia_embed_model = "nvidia/nv-embedqa-e5-v5"

|

||||

|

||||

|

||||

async def indexing_embedding_func(texts: list[str]) -> np.ndarray:

|

||||

return await nvidia_openai_embedding(

|

||||

return await nvidia_openai_embed(

|

||||

texts,

|

||||

model=nvidia_embed_model, # maximum 512 token

|

||||

# model="nvidia/llama-3.2-nv-embedqa-1b-v1",

|

||||

@@ -60,7 +60,7 @@ async def indexing_embedding_func(texts: list[str]) -> np.ndarray:

|

||||

|

||||

|

||||

async def query_embedding_func(texts: list[str]) -> np.ndarray:

|

||||

return await nvidia_openai_embedding(

|

||||

return await nvidia_openai_embed(

|

||||

texts,

|

||||

model=nvidia_embed_model, # maximum 512 token

|

||||

# model="nvidia/llama-3.2-nv-embedqa-1b-v1",

|

||||

|

||||

@@ -4,7 +4,7 @@ import logging

|

||||

import os

|

||||

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import ollama_embedding, ollama_model_complete

|

||||

from lightrag.llm.ollama import ollama_embed, ollama_model_complete

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

|

||||

WORKING_DIR = "./dickens_age"

|

||||

@@ -32,7 +32,7 @@ rag = LightRAG(

|

||||

embedding_func=EmbeddingFunc(

|

||||

embedding_dim=768,

|

||||

max_token_size=8192,

|

||||

func=lambda texts: ollama_embedding(

|

||||

func=lambda texts: ollama_embed(

|

||||

texts, embed_model="nomic-embed-text", host="http://localhost:11434"

|

||||

),

|

||||

),

|

||||

|

||||

@@ -3,7 +3,7 @@ import os

|

||||

import inspect

|

||||

import logging

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import ollama_model_complete, ollama_embedding

|

||||

from lightrag.llm.ollama import ollama_model_complete, ollama_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

|

||||

WORKING_DIR = "./dickens"

|

||||

@@ -23,7 +23,7 @@ rag = LightRAG(

|

||||

embedding_func=EmbeddingFunc(

|

||||

embedding_dim=768,

|

||||

max_token_size=8192,

|

||||

func=lambda texts: ollama_embedding(

|

||||

func=lambda texts: ollama_embed(

|

||||

texts, embed_model="nomic-embed-text", host="http://localhost:11434"

|

||||

),

|

||||

),

|

||||

|

||||

@@ -10,7 +10,7 @@ import os

|

||||

# logging.basicConfig(format="%(levelname)s:%(message)s", level=logging.WARN)

|

||||

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import ollama_embedding, ollama_model_complete

|

||||

from lightrag.llm.ollama import ollama_embed, ollama_model_complete

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

|

||||

WORKING_DIR = "./dickens_gremlin"

|

||||

@@ -41,7 +41,7 @@ rag = LightRAG(

|

||||

embedding_func=EmbeddingFunc(

|

||||

embedding_dim=768,

|

||||

max_token_size=8192,

|

||||

func=lambda texts: ollama_embedding(

|

||||

func=lambda texts: ollama_embed(

|

||||

texts, embed_model="nomic-embed-text", host="http://localhost:11434"

|

||||

),

|

||||

),

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

import os

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import ollama_model_complete, ollama_embed

|

||||

from lightrag.llm.ollama import ollama_model_complete, ollama_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

|

||||

# WorkingDir

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

import os

|

||||

import asyncio

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import openai_complete_if_cache, openai_embedding

|

||||

from lightrag.llm.openai import openai_complete_if_cache, openai_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

import numpy as np

|

||||

|

||||

@@ -26,7 +26,7 @@ async def llm_model_func(

|

||||

|

||||

|

||||

async def embedding_func(texts: list[str]) -> np.ndarray:

|

||||

return await openai_embedding(

|

||||

return await openai_embed(

|

||||

texts,

|

||||

model="solar-embedding-1-large-query",

|

||||

api_key=os.getenv("UPSTAGE_API_KEY"),

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

import os

|

||||

import asyncio

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import openai_complete_if_cache, openai_embedding

|

||||

from lightrag.llm.openai import openai_complete_if_cache, openai_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

import numpy as np

|

||||

|

||||

@@ -26,7 +26,7 @@ async def llm_model_func(

|

||||

|

||||

|

||||

async def embedding_func(texts: list[str]) -> np.ndarray:

|

||||

return await openai_embedding(

|

||||

return await openai_embed(

|

||||

texts,

|

||||

model="solar-embedding-1-large-query",

|

||||

api_key=os.getenv("UPSTAGE_API_KEY"),

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

import os

|

||||

import inspect

|

||||

from lightrag import LightRAG

|

||||

from lightrag.llm import openai_complete, openai_embedding

|

||||

from lightrag.llm.openai import openai_complete, openai_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

from lightrag.lightrag import always_get_an_event_loop

|

||||

from lightrag import QueryParam

|

||||

@@ -24,7 +24,7 @@ rag = LightRAG(

|

||||

embedding_func=EmbeddingFunc(

|

||||

embedding_dim=1024,

|

||||

max_token_size=8192,

|

||||

func=lambda texts: openai_embedding(

|

||||

func=lambda texts: openai_embed(

|

||||

texts=texts,

|

||||

model="text-embedding-bge-m3",

|

||||

base_url="http://127.0.0.1:1234/v1",

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

import os

|

||||

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import gpt_4o_mini_complete

|

||||

from lightrag.llm.openai import gpt_4o_mini_complete

|

||||

|

||||

WORKING_DIR = "./dickens"

|

||||

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

import os

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import ollama_embed, openai_complete_if_cache

|

||||

from lightrag.llm.ollama import ollama_embed, openai_complete_if_cache

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

|

||||

# WorkingDir

|

||||

|

||||

@@ -3,7 +3,7 @@ import os

|

||||

from pathlib import Path

|

||||

import asyncio

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import openai_complete_if_cache, openai_embedding

|

||||

from lightrag.llm.openai import openai_complete_if_cache, openai_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

import numpy as np

|

||||

from lightrag.kg.oracle_impl import OracleDB

|

||||

@@ -42,7 +42,7 @@ async def llm_model_func(

|

||||

|

||||

|

||||

async def embedding_func(texts: list[str]) -> np.ndarray:

|

||||

return await openai_embedding(

|

||||

return await openai_embed(

|

||||

texts,

|

||||

model=EMBEDMODEL,

|

||||

api_key=APIKEY,

|

||||

|

||||

@@ -1,7 +1,8 @@

|

||||

import os

|

||||

import asyncio

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import openai_complete_if_cache, siliconcloud_embedding

|

||||

from lightrag.llm.openai import openai_complete_if_cache

|

||||

from lightrag.llm.siliconcloud import siliconcloud_embedding

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

import numpy as np

|

||||

|

||||

|

||||

@@ -3,7 +3,7 @@ import logging

|

||||

|

||||

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import zhipu_complete, zhipu_embedding

|

||||

from lightrag.llm.zhipu import zhipu_complete, zhipu_embedding

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

|

||||

WORKING_DIR = "./dickens"

|

||||

|

||||

@@ -6,7 +6,7 @@ from dotenv import load_dotenv

|

||||

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.kg.postgres_impl import PostgreSQLDB

|

||||

from lightrag.llm import ollama_embedding, zhipu_complete

|

||||

from lightrag.llm.zhipu import ollama_embedding, zhipu_complete

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

|

||||

load_dotenv()

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

import os

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import gpt_4o_mini_complete

|

||||

from lightrag.llm.openai import gpt_4o_mini_complete

|

||||

#########

|

||||

# Uncomment the below two lines if running in a jupyter notebook to handle the async nature of rag.insert()

|

||||

# import nest_asyncio

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

import os

|

||||

import asyncio

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import gpt_4o_mini_complete, openai_embedding

|

||||

from lightrag.llm.openai import gpt_4o_mini_complete, openai_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

import numpy as np

|

||||

|

||||

@@ -35,7 +35,7 @@ EMBEDDING_MAX_TOKEN_SIZE = int(os.environ.get("EMBEDDING_MAX_TOKEN_SIZE", 8192))

|

||||

|

||||

|

||||

async def embedding_func(texts: list[str]) -> np.ndarray:

|

||||

return await openai_embedding(

|

||||

return await openai_embed(

|

||||

texts,

|

||||

model=EMBEDDING_MODEL,

|

||||

)

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

import os

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import gpt_4o_mini_complete

|

||||

from lightrag.llm.openai import gpt_4o_mini_complete

|

||||

|

||||

|

||||

#########

|

||||

|

||||

@@ -16,7 +16,7 @@

|

||||

"import logging\n",

|

||||

"import numpy as np\n",

|

||||

"from lightrag import LightRAG, QueryParam\n",

|

||||

"from lightrag.llm import openai_complete_if_cache, openai_embedding\n",

|

||||

"from lightrag.llm.openai import openai_complete_if_cache, openai_embed\n",

|

||||

"from lightrag.utils import EmbeddingFunc\n",

|

||||

"import nest_asyncio"

|

||||

]

|

||||

@@ -74,7 +74,7 @@

|

||||

"\n",

|

||||

"\n",

|

||||

"async def embedding_func(texts: list[str]) -> np.ndarray:\n",

|

||||

" return await openai_embedding(\n",

|

||||

" return await openai_embed(\n",

|

||||

" texts,\n",

|

||||

" model=\"ep-20241231173413-pgjmk\",\n",

|

||||

" api_key=API,\n",

|

||||

@@ -138,7 +138,7 @@

|

||||

"\n",

|

||||

"\n",

|

||||

"async def embedding_func(texts: list[str]) -> np.ndarray:\n",

|

||||

" return await openai_embedding(\n",

|

||||

" return await openai_embed(\n",

|

||||

" texts,\n",

|

||||

" model=\"ep-20241231173413-pgjmk\",\n",

|

||||

" api_key=API,\n",

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

import os

|

||||

import time

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import ollama_model_complete, ollama_embedding

|

||||

from lightrag.llm.ollama import ollama_model_complete, ollama_embed

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

|

||||

# Working directory and the directory path for text files

|

||||

@@ -20,7 +20,7 @@ rag = LightRAG(

|

||||

embedding_func=EmbeddingFunc(

|

||||

embedding_dim=768,

|

||||

max_token_size=8192,

|

||||

func=lambda texts: ollama_embedding(texts, embed_model="nomic-embed-text"),

|

||||

func=lambda texts: ollama_embed(texts, embed_model="nomic-embed-text"),

|

||||

),

|

||||

)

|

||||

|

||||

|

||||

@@ -8,10 +8,6 @@ import time

|

||||

import re

|

||||

from typing import List, Dict, Any, Optional, Union

|

||||

from lightrag import LightRAG, QueryParam

|

||||

from lightrag.llm import lollms_model_complete, lollms_embed

|

||||

from lightrag.llm import ollama_model_complete, ollama_embed

|

||||

from lightrag.llm import openai_complete_if_cache, openai_embedding

|

||||

from lightrag.llm import azure_openai_complete_if_cache, azure_openai_embedding

|

||||

from lightrag.api import __api_version__

|

||||

|

||||

from lightrag.utils import EmbeddingFunc

|

||||

@@ -468,13 +464,12 @@ def parse_args() -> argparse.Namespace:

|

||||

help="Path to SSL private key file (required if --ssl is enabled)",

|

||||

)

|

||||

parser.add_argument(

|

||||

'--auto-scan-at-startup',

|

||||

action='store_true',

|

||||

"--auto-scan-at-startup",

|

||||

action="store_true",

|

||||

default=False,

|

||||

help='Enable automatic scanning when the program starts'

|

||||

help="Enable automatic scanning when the program starts",

|

||||

)

|

||||

|

||||

|

||||

args = parser.parse_args()

|

||||

|

||||

return args
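The hunk above only restyles the `--auto-scan-at-startup` option, which is a `store_true` flag defaulting to `False`. As a minimal sketch of how such a flag parses, the block below builds a parser containing just this one option; `build_parser` is a hypothetical name used for illustration, and the real `parse_args()` defines many more arguments.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Build a parser that contains only the startup-scan flag."""
    parser = argparse.ArgumentParser(description="Sketch of the startup-scan flag")
    parser.add_argument(
        "--auto-scan-at-startup",
        action="store_true",
        default=False,
        help="Enable automatic scanning when the program starts",
    )
    return parser


parser = build_parser()
# argparse exposes the dashed option name as an underscored attribute.
print(parser.parse_args([]).auto_scan_at_startup)
print(parser.parse_args(["--auto-scan-at-startup"]).auto_scan_at_startup)
```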

|

||||

@@ -679,18 +674,21 @@ def create_app(args):

|

||||

async def lifespan(app: FastAPI):

|

||||

"""Lifespan context manager for startup and shutdown events"""

|

||||

# Startup logic

|

||||

try:

|

||||

new_files = doc_manager.scan_directory()

|

||||

for file_path in new_files:

|

||||

try:

|

||||

await index_file(file_path)

|

||||

except Exception as e:

|

||||

trace_exception(e)

|

||||

logging.error(f"Error indexing file {file_path}: {str(e)}")

|

||||

if args.auto_scan_at_startup:

|

||||

try:

|

||||

new_files = doc_manager.scan_directory()

|

||||

for file_path in new_files:

|

||||

try:

|

||||

await index_file(file_path)

|

||||

except Exception as e:

|

||||

trace_exception(e)

|

||||

logging.error(f"Error indexing file {file_path}: {str(e)}")

|

||||

|

||||

logging.info(f"Indexed {len(new_files)} documents from {args.input_dir}")

|

||||

except Exception as e:

|

||||

logging.error(f"Error during startup indexing: {str(e)}")

|

||||

ASCIIColors.info(

|

||||

f"Indexed {len(new_files)} documents from {args.input_dir}"

|

||||

)

|

||||

except Exception as e:

|

||||

logging.error(f"Error during startup indexing: {str(e)}")

|

||||

yield

|

||||

# Cleanup logic (if needed)

|

||||

pass
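The lifespan handler above scans and indexes the input directory only when `args.auto_scan_at_startup` is set. The following sketch reproduces that gating without FastAPI so it can be run on its own. `make_lifespan`, `demo`, and the stand-in `scan_directory` / `index_file` callables are assumptions made for illustration, not the project's code.

```python
import asyncio
from contextlib import asynccontextmanager
from types import SimpleNamespace


def make_lifespan(args, scan_directory, index_file):
    """Return a lifespan context manager that indexes files only when asked to."""

    @asynccontextmanager
    async def lifespan(app):
        if args.auto_scan_at_startup:
            for file_path in scan_directory():
                try:
                    await index_file(file_path)
                except Exception as exc:
                    # One bad file should not abort startup.
                    print(f"Error indexing file {file_path}: {exc}")
        yield  # the application would serve requests here

    return lifespan


async def demo(auto_scan: bool) -> list:
    indexed = []

    async def index_file(path):
        indexed.append(path)

    args = SimpleNamespace(auto_scan_at_startup=auto_scan)
    lifespan = make_lifespan(args, lambda: ["a.txt", "b.txt"], index_file)
    async with lifespan(app=None):
        pass
    return indexed


print(asyncio.run(demo(False)))
print(asyncio.run(demo(True)))
```

Keeping the scan behind the flag means a large input directory does not slow down every start; new files can still be picked up through the manual `/documents/scan` endpoint.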

|

||||

@@ -721,6 +719,20 @@ def create_app(args):

|

||||

|

||||

# Create working directory if it doesn't exist

|

||||

Path(args.working_dir).mkdir(parents=True, exist_ok=True)

|

||||

if args.llm_binding_host == "lollms" or args.embedding_binding == "lollms":

|

||||

from lightrag.llm.lollms import lollms_model_complete, lollms_embed

|

||||

if args.llm_binding_host == "ollama" or args.embedding_binding == "ollama":

|

||||

from lightrag.llm.ollama import ollama_model_complete, ollama_embed

|

||||

if args.llm_binding_host == "openai" or args.embedding_binding == "openai":

|

||||

from lightrag.llm.openai import openai_complete_if_cache, openai_embed

|

||||

if (

|

||||

args.llm_binding_host == "azure_openai"

|

||||

or args.embedding_binding == "azure_openai"

|

||||

):

|

||||

from lightrag.llm.azure_openai import (

|

||||

azure_openai_complete_if_cache,

|

||||

azure_openai_embed,

|

||||

)
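The conditional imports above load a provider module only when the selected binding needs it, so an unused provider's dependencies do not have to be installed. One way to express the same idea is a lookup table plus `importlib`. In the sketch below, `BINDING_MODULES` and `load_bindings` are hypothetical, and standard-library modules stand in for provider modules such as `lightrag.llm.ollama`, so the example runs anywhere.

```python
import importlib

# Hypothetical registry: binding name -> module path.
# Stdlib modules are placeholders for the real provider modules.
BINDING_MODULES = {
    "ollama": "json",
    "openai": "csv",
}


def load_bindings(llm_binding: str, embedding_binding: str) -> dict:
    """Import only the modules required by the selected bindings."""
    loaded = {}
    for name in {llm_binding, embedding_binding}:
        if name not in BINDING_MODULES:
            raise ValueError(f"unknown binding: {name}")
        loaded[name] = importlib.import_module(BINDING_MODULES[name])
    return loaded


modules = load_bindings("ollama", "ollama")
print(sorted(modules))
```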

|

||||

|

||||

async def openai_alike_model_complete(

|

||||

prompt,

|

||||

@@ -774,13 +786,13 @@ def create_app(args):

|

||||

api_key=args.embedding_binding_api_key,

|

||||

)

|

||||

if args.embedding_binding == "ollama"

|

||||

else azure_openai_embedding(

|

||||

else azure_openai_embed(

|

||||

texts,

|

||||

model=args.embedding_model, # no host is used for openai,

|

||||

api_key=args.embedding_binding_api_key,

|

||||

)

|

||||

if args.embedding_binding == "azure_openai"

|

||||

else openai_embedding(

|

||||

else openai_embed(

|

||||

texts,

|

||||

model=args.embedding_model, # no host is used for openai,

|

||||

api_key=args.embedding_binding_api_key,

|

||||

@@ -907,42 +919,21 @@ def create_app(args):

|

||||

else:

|

||||

logging.warning(f"No content extracted from file: {file_path}")

|

||||

|

||||

@asynccontextmanager

|

||||

async def lifespan(app: FastAPI):

|

||||

"""Lifespan context manager for startup and shutdown events"""

|

||||

# Startup logic

|

||||

# Now only if this option is active, we can scan. This is better for big databases where there are hundreds of

|

||||

# files. Makes the startup faster

|

||||

if args.auto_scan_at_startup:

|

||||

ASCIIColors.info("Auto scan is active, rescanning the input directory.")

|

||||

try:

|

||||

new_files = doc_manager.scan_directory()

|

||||

for file_path in new_files:

|

||||

try:

|

||||

await index_file(file_path)

|

||||

except Exception as e:

|

||||

trace_exception(e)

|

||||

logging.error(f"Error indexing file {file_path}: {str(e)}")

|

||||

|

||||

logging.info(f"Indexed {len(new_files)} documents from {args.input_dir}")

|

||||

except Exception as e:

|

||||

logging.error(f"Error during startup indexing: {str(e)}")

|

||||

|

||||

@app.post("/documents/scan", dependencies=[Depends(optional_api_key)])

|

||||

async def scan_for_new_documents():

|

||||

"""

|

||||

Manually trigger scanning for new documents in the directory managed by `doc_manager`.

|

||||

|

||||

|

||||

This endpoint facilitates manual initiation of a document scan to identify and index new files.

|

||||

It processes all newly detected files, attempts indexing each file, logs any errors that occur,

|

||||

and returns a summary of the operation.

|

||||

|

||||

|

||||

Returns:

|

||||

dict: A dictionary containing:

|

||||

- "status" (str): Indicates success or failure of the scanning process.

|

||||

- "indexed_count" (int): The number of successfully indexed documents.

|

||||

- "total_documents" (int): Total number of documents that have been indexed so far.

|

||||

|

||||

|

||||

Raises:

|

||||

HTTPException: If an error occurs during the document scanning process, a 500 status

|

||||

code is returned with details about the exception.

|

||||
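The scan behaviour described in the docstring above (index each new file, log failures, return a summary) can be sketched as follows. This is a minimal illustration, not the code from this PR: `scan_and_index`, its parameters, and the plain list used for `indexed_files` are hypothetical stand-ins for `doc_manager` and `index_file`.

```python
import asyncio
import logging


async def scan_and_index(new_files, index_file, indexed_files):
    """Index each new file, log failures, and return a summary dict.

    `index_file` is any async callable taking a path (assumption);
    `indexed_files` is a plain list standing in for the manager's state.
    """
    indexed_count = 0
    for file_path in new_files:
        try:
            await index_file(file_path)
            indexed_files.append(file_path)
            indexed_count += 1
        except Exception as exc:
            # One failing file is logged and skipped; the scan continues.
            logging.error(f"Error indexing file {file_path}: {exc}")
    return {
        "status": "success",
        "indexed_count": indexed_count,
        "total_documents": len(indexed_files),
    }


if __name__ == "__main__":
    async def fake_index(path):
        # Hypothetical indexer that rejects one file to show error handling.
        if path.endswith(".bad"):
            raise ValueError("unreadable file")

    print(asyncio.run(scan_and_index(["a.txt", "b.bad"], fake_index, [])))
```

Catching the exception per file, rather than around the whole loop, is what lets the summary report a partial success.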

@@ -970,25 +961,25 @@ def create_app(args):

|

||||

async def upload_to_input_dir(file: UploadFile = File(...)):

|

||||

"""

|

||||

Endpoint for uploading a file to the input directory and indexing it.

|

||||

|

||||

This API endpoint accepts a file through an HTTP POST request, checks if the

|

||||

|

||||

This API endpoint accepts a file through an HTTP POST request, checks if the

|

||||

uploaded file is of a supported type, saves it in the specified input directory,

|

||||

indexes it for retrieval, and returns a success status with relevant details.

|

||||

|

||||

|

||||

Parameters:

|

||||

file (UploadFile): The file to be uploaded. It must have an allowed extension as per

|

||||

`doc_manager.supported_extensions`.

|

||||

|

||||

|

||||

Returns:

|

||||

dict: A dictionary containing the upload status ("success"),

|

||||

a message detailing the operation result, and

|

||||

dict: A dictionary containing the upload status ("success"),

|

||||

a message detailing the operation result, and

|

||||

the total number of indexed documents.

|

||||

|

||||

|

||||

Raises:

|

||||

HTTPException: If the file type is not supported, it raises a 400 Bad Request error.

|

||||

If any other exception occurs during the file handling or indexing,

|

||||

it raises a 500 Internal Server Error with details about the exception.

|

||||

"""

|

||||

"""

|

||||

try:

|

||||

if not doc_manager.is_supported_file(file.filename):

|

||||

raise HTTPException(

|

||||
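The upload handler above rejects unsupported file types before saving anything. A minimal sketch of such a check, assuming a hypothetical extension tuple modelled on the upload form's `accept` list; the real `doc_manager.supported_extensions` and `is_supported_file` may differ:

```python
from pathlib import Path

# Hypothetical list; the actual value lives in doc_manager.supported_extensions.
SUPPORTED_EXTENSIONS = (".pdf", ".md", ".txt", ".doc", ".docx")


def is_supported_file(filename: str) -> bool:
    """Return True when the filename's extension is in the allowed set."""
    # Compare case-insensitively so "NOTES.MD" is treated like "notes.md".
    return Path(filename).suffix.lower() in SUPPORTED_EXTENSIONS


if __name__ == "__main__":
    for name in ("report.pdf", "NOTES.MD", "image.png"):
        print(name, is_supported_file(name))
```

Checking the extension first keeps the 400 response cheap: nothing is written to the input directory for a rejected upload.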

@@ -1017,23 +1008,23 @@ def create_app(args):

|

||||

async def query_text(request: QueryRequest):

|

||||

"""

|

||||

Handle a POST request at the /query endpoint to process user queries using RAG capabilities.

|

||||

|

||||

|

||||

Parameters:

|

||||

request (QueryRequest): A Pydantic model containing the following fields:

|

||||

- query (str): The text of the user's query.

|

||||

- mode (ModeEnum): Optional. Specifies the mode of retrieval augmentation.

|

||||

- stream (bool): Optional. Determines if the response should be streamed.

|

||||

- only_need_context (bool): Optional. If true, returns only the context without further processing.

|

||||

|

||||

|

||||

Returns:

|

||||

QueryResponse: A Pydantic model containing the result of the query processing.

|

||||

QueryResponse: A Pydantic model containing the result of the query processing.

|

||||

If a string is returned (e.g., cache hit), it's directly returned.

|

||||

Otherwise, an async generator may be used to build the response.

|

||||

|

||||

|

||||

Raises:

|

||||

HTTPException: Raised when an error occurs during the request handling process,

|

||||

with status code 500 and detail containing the exception message.

|

||||

"""

|

||||

"""

|

||||

try:

|

||||

response = await rag.aquery(

|

||||

request.query,

|

||||
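The docstring above notes that the query result may be a plain string (for example on a cache hit) or an async generator. A minimal sketch of handling both cases; `collect_response` is a hypothetical helper and not part of this PR:

```python
import asyncio


async def collect_response(response):
    """Return the full text whether `response` is a str or an async generator."""
    if isinstance(response, str):
        # Already complete, e.g. a cached answer.
        return response
    chunks = []
    async for chunk in response:
        chunks.append(chunk)
    return "".join(chunks)


if __name__ == "__main__":
    async def fake_stream():
        # Hypothetical stand-in for a streamed model response.
        for part in ("Hello", ", ", "world"):
            yield part

    print(asyncio.run(collect_response("cached answer")))
    print(asyncio.run(collect_response(fake_stream())))
```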

@@ -1074,7 +1065,7 @@ def create_app(args):

|

||||

|

||||

Returns:

|

||||

StreamingResponse: A streaming response containing the RAG query results.

|

||||

"""

|

||||

"""

|

||||

try:

|

||||

response = await rag.aquery( # Use aquery instead of query, and add await

|

||||

request.query,

|

||||

@@ -1134,7 +1125,7 @@ def create_app(args):

|

||||

|

||||

Returns:

|

||||

InsertResponse: A response object containing the status of the operation, a message, and the number of documents inserted.

|

||||

"""

|

||||

"""

|

||||

try:

|

||||

await rag.ainsert(request.text)

|

||||

return InsertResponse(

|

||||

@@ -1759,7 +1750,7 @@ def create_app(args):

|

||||

"status": "healthy",

|

||||

"working_directory": str(args.working_dir),

|

||||

"input_directory": str(args.input_dir),

|

||||

"indexed_files": len(doc_manager.indexed_files),

|

||||

"indexed_files": doc_manager.indexed_files,

|

||||

"configuration": {

|

||||

# LLM configuration binding/host address (if applicable)/model (if applicable)

|

||||

"llm_binding": args.llm_binding,

|

||||

@@ -1772,7 +1763,7 @@ def create_app(args):

|

||||

"max_tokens": args.max_tokens,

|

||||

},

|

||||

}

|

||||

|

||||

|

||||

# Serve the static files

|

||||

static_dir = Path(__file__).parent / "static"

|

||||

static_dir.mkdir(exist_ok=True)

|

||||

@@ -1780,13 +1771,13 @@ def create_app(args):

|

||||

|

||||

return app

|

||||

|

||||

|

||||

|

||||

def main():

|

||||

args = parse_args()

|

||||

import uvicorn

|

||||

|

||||

app = create_app(args)

|

||||

display_splash_screen(args)

|

||||

display_splash_screen(args)

|

||||

uvicorn_config = {

|

||||

"app": app,

|

||||

"host": args.host,

|

||||

|

||||

@@ -1,4 +1,3 @@

|

||||

aioboto3

|

||||

ascii_colors

|

||||

fastapi

|

||||

nano_vectordb

|

||||

|

||||

@@ -11,7 +11,7 @@

|

||||

<script src="https://cdnjs.cloudflare.com/ajax/libs/prism/1.24.1/prism.min.js"></script>

|

||||

<script src="https://cdnjs.cloudflare.com/ajax/libs/prism/1.24.1/components/prism-python.min.js"></script>

|

||||

<script src="https://cdnjs.cloudflare.com/ajax/libs/prism/1.24.1/components/prism-javascript.min.js"></script>

|

||||

<script src="https://cdnjs.cloudflare.com/ajax/libs/prism/1.24.1/components/prism-css.min.js"></script>

|

||||

<script src="https://cdnjs.cloudflare.com/ajax/libs/prism/1.24.1/components/prism-css.min.js"></script>

|

||||

<style>

|

||||

body {

|

||||

font-family: 'Inter', sans-serif;

|

||||

@@ -37,12 +37,12 @@

|

||||

overflow-x: auto;

|

||||

margin: 1rem 0;

|

||||

}

|

||||

|

||||

|

||||

code {

|

||||

font-family: 'Fira Code', monospace;

|

||||

font-size: 0.9em;

|

||||

}

|

||||

|

||||

|

||||

/* Inline code styling */

|

||||

:not(pre) > code {

|

||||

background: #f4f4f4;

|

||||

@@ -50,7 +50,7 @@

|

||||

border-radius: 0.3em;

|

||||

font-size: 0.9em;

|

||||

}

|

||||

|

||||

|

||||

/* Prose modifications for better markdown rendering */

|

||||

.prose pre {

|

||||

background: #f4f4f4;

|

||||

@@ -58,7 +58,7 @@

|

||||

border-radius: 0.5rem;

|

||||

margin: 1rem 0;

|

||||

}

|

||||

|

||||

|

||||

.prose code {

|

||||

color: #374151;

|

||||

background: #f4f4f4;

|

||||

@@ -66,7 +66,7 @@

|

||||

border-radius: 0.3em;

|

||||

font-size: 0.9em;

|

||||

}

|

||||

|

||||

|

||||

.prose {

|

||||

max-width: none;

|

||||

}

|

||||

@@ -82,14 +82,14 @@

|

||||

<!-- Health Check Button -->

|

||||

<button id="healthCheckBtn" class="p-2 text-slate-600 hover:text-slate-800 transition-colors rounded-lg hover:bg-slate-100">

|

||||

<svg class="w-6 h-6" fill="none" stroke="currentColor" viewBox="0 0 24 24">

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

d="M9 12l2 2 4-4m6 2a9 9 0 11-18 0 9 9 0 0118 0z" />

|

||||

</svg>

|

||||

</button>

|

||||

<!-- Settings Button -->

|

||||

<button id="settingsBtn" class="p-2 text-slate-600 hover:text-slate-800 transition-colors rounded-lg hover:bg-slate-100">

|

||||

<svg class="w-6 h-6" fill="none" stroke="currentColor" viewBox="0 0 24 24">

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

d="M10.325 4.317c.426-1.756 2.924-1.756 3.35 0a1.724 1.724 0 002.573 1.066c1.543-.94 3.31.826 2.37 2.37a1.724 1.724 0 001.065 2.572c1.756.426 1.756 2.924 0 3.35a1.724 1.724 0 00-1.066 2.573c.94 1.543-.826 3.31-2.37 2.37a1.724 1.724 0 00-2.572 1.065c-.426 1.756-2.924 1.756-3.35 0a1.724 1.724 0 00-2.573-1.066c-1.543.94-3.31-.826-2.37-2.37a1.724 1.724 0 00-1.065-2.572c-1.756-.426-1.756-2.924 0-3.35a1.724 1.724 0 001.066-2.573c-.94-1.543.826-3.31 2.37-2.37.996.608 2.296.07 2.572-1.065z"/>

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

d="M12 15a3 3 0 100-6 3 3 0 000 6z" />

|

||||

@@ -107,19 +107,19 @@

|

||||

<section class="mb-8">

|

||||

<div class="bg-slate-50 p-6 rounded-lg">

|

||||

<h2 class="text-xl font-semibold text-slate-800 mb-4">Upload Documents</h2>

|

||||

|

||||

|

||||

<!-- Upload Form -->

|

||||

<form id="uploadForm" class="space-y-4">

|

||||

<!-- Drop Zone -->

|

||||

<div class="drop-zone rounded-lg p-8 text-center cursor-pointer">

|

||||

<input type="file" id="fileInput" class="hidden" multiple accept=".pdf,.txt,.doc,.docx">

|

||||

<input type="file" id="fileInput" class="hidden" multiple accept=".pdf,.md,.txt,.doc,.docx">

|

||||

<div class="flex flex-col items-center">

|

||||

<svg class="w-12 h-12 text-slate-400 mb-4" fill="none" stroke="currentColor" viewBox="0 0 24 24">

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

d="M7 16a4 4 0 01-.88-7.903A5 5 0 1115.9 6L16 6a5 5 0 011 9.9M15 13l-3-3m0 0l-3 3m3-3v12" />

|

||||

</svg>

|

||||

<p class="text-slate-700 font-medium">Drop files here or click to upload</p>

|

||||

<p class="text-slate-500 text-sm mt-1">PDF, TXT, DOC, DOCX (Max 10MB)</p>

|

||||

<p class="text-slate-500 text-sm mt-1">PDF, MD, TXT, DOC, DOCX (Max 10MB)</p>

|

||||

</div>

|

||||

</div>

|

||||

|

||||

@@ -135,9 +135,9 @@

|

||||

</div>

|

||||

|

||||

<!-- Upload Button -->

|

||||

<button type="submit"

|

||||

class="w-full bg-blue-600 text-white px-6 py-3 rounded-lg hover:bg-blue-700

|

||||

transition-colors font-medium focus:outline-none focus:ring-2

|

||||

<button type="submit"

|

||||

class="w-full bg-blue-600 text-white px-6 py-3 rounded-lg hover:bg-blue-700

|

||||

transition-colors font-medium focus:outline-none focus:ring-2

|

||||

focus:ring-blue-500 focus:ring-offset-2">

|

||||

Upload Documents

|

||||

</button>

|

||||

@@ -149,19 +149,19 @@

|

||||

<section>

|

||||

<div class="bg-slate-50 p-6 rounded-lg">

|

||||

<h2 class="text-xl font-semibold text-slate-800 mb-4">Query Documents</h2>

|

||||

|

||||

|

||||

<form id="queryForm" class="space-y-4">

|

||||

<textarea id="queryInput"

|

||||

class="w-full p-4 border border-slate-300 rounded-lg focus:ring-2

|

||||

class="w-full p-4 border border-slate-300 rounded-lg focus:ring-2

|

||||

focus:ring-blue-500 focus:border-blue-500 transition-all

|

||||

min-h-[120px] resize-y"

|

||||

placeholder="Enter your query here..."

|

||||

></textarea>

|

||||

|

||||

<button type="submit"

|

||||

class="w-full bg-green-600 text-white px-6 py-3 rounded-lg

|

||||

class="w-full bg-green-600 text-white px-6 py-3 rounded-lg

|

||||

hover:bg-green-700 transition-colors font-medium

|

||||

focus:outline-none focus:ring-2 focus:ring-green-500

|

||||

focus:outline-none focus:ring-2 focus:ring-green-500

|

||||

focus:ring-offset-2">

|

||||

Submit Query

|

||||

</button>

|

||||

@@ -178,11 +178,11 @@

|

||||

<div id="settingsModal" class="hidden fixed inset-0 bg-slate-900/50 backdrop-blur-sm flex items-center justify-center">

|

||||

<div class="bg-white rounded-xl shadow-lg p-6 w-full max-w-md m-4">

|

||||

<h3 class="text-lg font-semibold text-slate-900 mb-4">Settings</h3>

|

||||

<input type="text" id="apiKeyInput"

|

||||

class="w-full p-2 border rounded focus:ring-2 focus:ring-blue-500"

|

||||

<input type="text" id="apiKeyInput"

|

||||

class="w-full p-2 border rounded focus:ring-2 focus:ring-blue-500"

|

||||

placeholder="Enter API Key">

|

||||

<div class="mt-6 flex justify-end space-x-4">

|

||||

<button id="closeSettingsBtn"

|

||||

<button id="closeSettingsBtn"

|

||||

class="px-4 py-2 text-slate-700 hover:text-slate-900">

|

||||

Cancel

|

||||

</button>

|

||||

@@ -239,7 +239,7 @@

|

||||

<div class="flex items-center justify-between p-3 bg-white rounded-lg border">

|

||||

<div class="flex items-center">

|

||||

<svg class="w-5 h-5 text-slate-400 mr-2" fill="none" stroke="currentColor" viewBox="0 0 24 24">

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

d="M7 21h10a2 2 0 002-2V9.414a1 1 0 00-.293-.707l-5.414-5.414A1 1 0 0012.586 3H7a2 2 0 00-2 2v14a2 2 0 002 2z" />

|

||||

</svg>

|

||||

<span class="text-sm text-slate-600">${file.name}</span>

|

||||

@@ -247,8 +247,8 @@

|

||||

</div>

|

||||

<button type="button" data-index="${index}" class="text-red-500 hover:text-red-700 p-1">

|

||||

<svg class="w-5 h-5" fill="currentColor" viewBox="0 0 20 20">

|

||||

<path fill-rule="evenodd"

|

||||

d="M4.293 4.293a1 1 0 011.414 0L10 8.586l4.293-4.293a1 1 0 111.414 1.414L11.414 10l4.293 4.293a1 1 0 01-1.414 1.414L10 11.414l-4.293 4.293a1 1 0 01-1.414-1.414L8.586 10 4.293 5.707a1 1 0 010-1.414z"

|

||||

<path fill-rule="evenodd"

|

||||

d="M4.293 4.293a1 1 0 011.414 0L10 8.586l4.293-4.293a1 1 0 111.414 1.414L11.414 10l4.293 4.293a1 1 0 01-1.414 1.414L10 11.414l-4.293 4.293a1 1 0 01-1.414-1.414L8.586 10 4.293 5.707a1 1 0 010-1.414z"

|

||||

clip-rule="evenodd" />

|

||||

</svg>

|

||||

</button>

|

||||

@@ -290,7 +290,7 @@

|

||||

dropZone.addEventListener('click', () => {

|

||||

fileInput.click();

|

||||

});

|

||||

|

||||

|

||||

['dragenter', 'dragover', 'dragleave', 'drop'].forEach(eventName => {

|

||||

dropZone.addEventListener(eventName, (e) => {

|

||||

e.preventDefault();

|

||||

@@ -319,25 +319,25 @@

|

||||

uploadForm.addEventListener('submit', async (e) => {

|

||||

e.preventDefault();

|

||||

const files = fileInput.files;

|

||||

|

||||

|

||||

if (files.length === 0) {

|

||||

uploadStatus.innerHTML = '<span class="text-red-500">Please select files to upload</span>';

|

||||

return;

|

||||

}

|

||||

|

||||

|

||||

uploadProgress.classList.remove('hidden');

|

||||

const progressBar = uploadProgress.querySelector('.bg-blue-600');

|

||||

uploadStatus.textContent = 'Starting upload...';

|

||||

|

||||

|

||||

try {

|

||||

for (let i = 0; i < files.length; i++) {

|

||||

const file = files[i];

|

||||

const formData = new FormData();

|

||||

formData.append('file', file);

|

||||

|

||||

|

||||

uploadStatus.textContent = `Uploading ${file.name} (${i + 1}/${files.length})...`;

|

||||

console.log(`Uploading file: ${file.name}`);

|

||||

|

||||

|

||||

try {

|

||||

const response = await fetch('/documents/upload', {

|

||||

method: 'POST',

|

||||

@@ -346,30 +346,30 @@

|

||||

},

|

||||

body: formData

|

||||

});

|

||||

|

||||

|

||||

console.log('Response status:', response.status);

|

||||

|

||||

|

||||

if (!response.ok) {

|

||||

const errorData = await response.json();

|

||||

throw new Error(`Upload failed: ${errorData.detail || response.statusText}`);

|

||||

}

|

||||

|

||||

|

||||

// Update progress

|

||||

const progress = ((i + 1) / files.length) * 100;

|

||||

progressBar.style.width = `${progress}%`;

|

||||

console.log(`Progress: ${progress}%`);

|

||||

|

||||

|

||||

} catch (error) {

|

||||

console.error('Upload error:', error);

|

||||

uploadStatus.innerHTML = `<span class="text-red-500">Error uploading ${file.name}: ${error.message}</span>`;

|

||||

return;

|

||||

}

|

||||

}

|

||||

|

||||

|

||||

// All files uploaded successfully

|

||||

uploadStatus.innerHTML = '<span class="text-green-500">All files uploaded successfully!</span>';

|

||||

progressBar.style.width = '100%';

|

||||

|

||||

|

||||

// Clear the file input and selection display

|

||||

setTimeout(() => {

|

||||

fileInput.value = '';

|

||||

@@ -377,7 +377,7 @@

|

||||

uploadProgress.classList.add('hidden');

|

||||

progressBar.style.width = '0%';

|

||||

}, 3000);

|

||||

|

||||

|

||||

} catch (error) {

|

||||

console.error('General upload error:', error);

|

||||

uploadStatus.innerHTML = `<span class="text-red-500">Upload failed: ${error.message}</span>`;

|

||||

@@ -391,20 +391,20 @@

|

||||

button.className = 'copy-button absolute top-1 right-1 p-1 bg-slate-700/80 hover:bg-slate-700 text-white rounded text-xs opacity-0 group-hover:opacity-100 transition-opacity';

|

||||

button.innerHTML = `

|

||||

<svg class="w-3 h-3" fill="none" stroke="currentColor" viewBox="0 0 24 24">

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

d="M8 16H6a2 2 0 01-2-2V6a2 2 0 012-2h8a2 2 0 012 2v2m-6 12h8a2 2 0 002-2v-8a2 2 0 00-2-2h-8a2 2 0 00-2 2v8a2 2 0 002 2z" />

|

||||

</svg>

|

||||

`;

|

||||

|

||||

|

||||

pre.style.position = 'relative';

|

||||

pre.classList.add('group');

|

||||

|

||||

|

||||

button.addEventListener('click', async () => {

|

||||

const codeElement = pre.querySelector('code');

|

||||

if (!codeElement) return;

|

||||

|

||||

|

||||

const text = codeElement.textContent;

|

||||

|

||||

|

||||

try {

|

||||

// First try using the Clipboard API

|

||||

if (navigator.clipboard && window.isSecureContext) {

|

||||

@@ -428,29 +428,29 @@

|

||||

return;

|

||||

}

|

||||

}

|

||||

|

||||

|

||||

// Show success feedback

|

||||

button.innerHTML = `

|

||||

<svg class="w-3 h-3" fill="none" stroke="currentColor" viewBox="0 0 24 24">

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2" d="M5 13l4 4L19 7" />

|

||||

</svg>

|

||||

`;

|

||||

|

||||

|

||||

// Reset button after 2 seconds

|

||||

setTimeout(() => {

|

||||

button.innerHTML = `

|

||||

<svg class="w-3 h-3" fill="none" stroke="currentColor" viewBox="0 0 24 24">

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

d="M8 16H6a2 2 0 01-2-2V6a2 2 0 012-2h8a2 2 0 012 2v2m-6 12h8a2 2 0 002-2v-8a2 2 0 00-2-2h-8a2 2 0 00-2 2v8a2 2 0 002 2z" />

|

||||

</svg>

|

||||

`;

|

||||

}, 2000);

|

||||

|

||||

|

||||

} catch (err) {

|

||||

console.error('Copy failed:', err);

|

||||

}

|

||||

});

|

||||

|

||||

|

||||

pre.appendChild(button);

|

||||

}

|

||||

});

|

||||

@@ -460,12 +460,12 @@

|

||||

queryForm.addEventListener('submit', async (e) => {

|

||||

e.preventDefault();

|

||||

const query = queryInput.value.trim();

|

||||

|

||||

|

||||

if (!query) {

|

||||

queryResponse.innerHTML = '<p class="text-red-500">Please enter a query</p>';

|

||||

return;

|

||||

}

|

||||

|

||||

|

||||

// Show loading state

|

||||

queryResponse.innerHTML = `

|

||||

<div class="animate-pulse">

|

||||

@@ -478,10 +478,10 @@

|

||||

</div>

|

||||

</div>

|

||||

`;

|

||||

|

||||

|

||||

try {

|

||||

console.log('Sending query:', query);

|

||||

|

||||

|

||||

const response = await fetch('/query', {

|

||||

method: 'POST',

|

||||

headers: {

|

||||

@@ -490,17 +490,17 @@

|

||||

},

|

||||

body: JSON.stringify({ query })

|

||||

});

|

||||

|

||||

|

||||

console.log('Response status:', response.status);

|

||||

|

||||

|

||||

if (!response.ok) {

|

||||

const errorData = await response.json();

|

||||

throw new Error(`Query failed: ${errorData.detail || response.statusText}`);

|

||||

}

|

||||

|

||||

|

||||

const data = await response.json();

|

||||

console.log('Query response:', data);

|

||||

|

||||

|

||||

// Format and display the response

|

||||

if (data.response) {

|

||||

const formattedResponse = marked.parse(data.response, {

|

||||

@@ -510,19 +510,19 @@

|

||||

}

|

||||

return code;

|

||||

}

|

||||

});

|

||||

});

|

||||

queryResponse.innerHTML = `

|

||||

<div class="prose prose-slate max-w-none">

|

||||

${formattedResponse}

|

||||

</div>

|

||||

`;

|

||||

|

||||

|

||||

// Re-trigger Prism highlighting

|

||||

Prism.highlightAllUnder(queryResponse);

|

||||

} else {

|

||||

queryResponse.innerHTML = '<p class="text-slate-600">No response data received</p>';

|

||||

}

|

||||

|

||||

|

||||

// Call this after loading markdown content

|

||||

addCopyButtons();

|

||||

// Optional: Add sources if available

|

||||

@@ -534,7 +534,7 @@

|

||||

${data.sources.map(source => `

|

||||

<li class="flex items-center space-x-2">

|

||||

<svg class="w-4 h-4 text-slate-400" fill="none" stroke="currentColor" viewBox="0 0 24 24">

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

d="M9 12h6m-6 4h6m2 5H7a2 2 0 01-2-2V5a2 2 0 012-2h5.586a1 1 0 01.707.293l5.414 5.414a1 1 0 01.293.707V19a2 2 0 01-2 2z" />

|

||||

</svg>

|

||||

<span>${source}</span>

|

||||

@@ -545,7 +545,7 @@

|

||||

`;

|

||||

queryResponse.insertAdjacentHTML('beforeend', sourcesHtml);

|

||||

}

|

||||

|

||||

|

||||

} catch (error) {

|

||||

console.error('Query error:', error);

|

||||

queryResponse.innerHTML = `

|

||||

@@ -555,14 +555,14 @@

|

||||

</div>

|

||||

`;

|

||||

}

|

||||

|

||||

|

||||

// Optional: Add a copy button for the response

|

||||

const copyButton = document.createElement('button');

|

||||

copyButton.className = 'mt-4 px-3 py-1 text-sm text-slate-600 hover:text-slate-800 border border-slate-300 rounded hover:bg-slate-50 transition-colors';

|

||||

copyButton.innerHTML = `

|

||||

<span class="flex items-center space-x-1">

|

||||

<svg class="w-4 h-4" fill="none" stroke="currentColor" viewBox="0 0 24 24">

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

d="M8 16H6a2 2 0 01-2-2V6a2 2 0 012-2h8a2 2 0 012 2v2m-6 12h8a2 2 0 002-2v-8a2 2 0 00-2-2h-8a2 2 0 00-2 2v8a2 2 0 002 2z" />

|

||||

</svg>

|

||||

<span>Copy Response</span>

|

||||

@@ -583,7 +583,7 @@

|

||||

copyButton.innerHTML = `

|

||||

<span class="flex items-center space-x-1">

|

||||

<svg class="w-4 h-4" fill="none" stroke="currentColor" viewBox="0 0 24 24">

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

<path stroke-linecap="round" stroke-linejoin="round" stroke-width="2"

|

||||

d="M8 16H6a2 2 0 01-2-2V6a2 2 0 012-2h8a2 2 0 012 2v2m-6 12h8a2 2 0 002-2v-8a2 2 0 00-2-2h-8a2 2 0 00-2 2v8a2 2 0 002 2z" />

|

||||

</svg>

|

||||

<span>Copy Response</span>

|

||||

@@ -620,6 +620,9 @@

|

||||

|

||||

if (response.ok) {

|

||||

const data = await response.json();

|

||||

// Convert indexed_files to array if it's not already

|

||||

const files = Array.isArray(data.indexed_files) ? data.indexed_files : data.indexed_files.split(',');

|

||||

|

||||

healthInfo.innerHTML = `

|

||||

<div class="space-y-4">

|

||||

<div class="flex items-center">

|

||||

@@ -629,7 +632,14 @@

|

||||

<div class="space-y-2">

|

||||

<p><span class="font-medium">Working Directory:</span> ${data.working_directory}</p>

|

||||

<p><span class="font-medium">Input Directory:</span> ${data.input_directory}</p>

|

||||

<p><span class="font-medium">Indexed Files:</span> ${data.indexed_files}</p>

|

||||

<div>

|

||||

<p><span class="font-medium">Indexed Files:</span> <span class="text-slate-500">(${files.length} files)</span></p>

|

||||

<div class="mt-2 max-h-40 overflow-y-auto border rounded p-2">

|

||||

<ul class="space-y-1 text-sm">

|

||||

${files.map(file => `<li class="text-slate-600">${file}</li>`).join('')}

|

||||

</ul>

|

||||

</div>

|

||||

</div>

|

||||

</div>

|

||||

<div class="border-t pt-4">

|

||||

<h4 class="font-medium mb-2">Configuration</h4>

|

||||

@@ -650,6 +660,7 @@

|

||||

}

|

||||

});

|

||||

|

||||

|

||||

$('#closeHealthBtn').addEventListener('click', () => {

|

||||

healthModal.classList.add('hidden');

|

||||

});

|

||||

|

||||
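The health-check changes above make the server return `indexed_files` as a list, and the page falls back to splitting a comma-separated string. A client written in Python could normalize the field in the same spirit. This is a sketch under assumptions: `normalize_indexed_files` is a hypothetical helper, and unlike the JavaScript `split(',')` it drops empty entries.

```python
def normalize_indexed_files(value):
    """Return `indexed_files` as a list of names.

    Accepts a list (returned as-is) or a comma-separated string.
    Any other type, such as a bare count, is rejected explicitly.
    """
    if isinstance(value, list):
        return value
    if isinstance(value, str):
        # Drop empty entries so "" yields [] rather than [""].
        return [name for name in value.split(",") if name]
    raise TypeError(f"unsupported indexed_files value: {value!r}")


if __name__ == "__main__":
    print(normalize_indexed_files(["a.txt", "b.md"]))
    print(normalize_indexed_files("a.txt,b.md"))
```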

58

lightrag/exceptions.py

Normal file

@@ -0,0 +1,58 @@

|

||||

import httpx

|

||||

from typing import Literal

|

||||

|

||||

|

||||

class APIStatusError(Exception):

|

||||

"""Raised when an API response has a status code of 4xx or 5xx."""

|

||||

|

||||

response: httpx.Response

|

||||

status_code: int

|

||||

request_id: str | None

|

||||

|

||||

def __init__(

|

||||

self, message: str, *, response: httpx.Response, body: object | None

|

||||

) -> None:

|

||||

super().__init__(message)  # Exception.__init__ accepts no keyword arguments, so body= would raise TypeError

|

||||

self.response = response

|

||||

self.status_code = response.status_code

|

||||

self.request_id = response.headers.get("x-request-id")

|

||||

|

||||

|

||||

class APIConnectionError(Exception):

|

||||

def __init__(

|

||||

self, *, message: str = "Connection error.", request: httpx.Request

|

||||

) -> None:

|

||||

super().__init__(message)  # Exception.__init__ accepts no keyword arguments, so body= would raise TypeError

|

||||

|

||||

|

||||

class BadRequestError(APIStatusError):

|

||||

status_code: Literal[400] = 400 # pyright: ignore[reportIncompatibleVariableOverride]

|

||||

|

||||

|

||||

class AuthenticationError(APIStatusError):

|

||||

status_code: Literal[401] = 401 # pyright: ignore[reportIncompatibleVariableOverride]

|

||||

|

||||

|

||||

class PermissionDeniedError(APIStatusError):

|

||||

status_code: Literal[403] = 403 # pyright: ignore[reportIncompatibleVariableOverride]

|

||||

|

||||

|

||||